My musings on Twitter are mostly a stream of poking fun at corporatist takes in The New York Times or The Economist. Every once in a while, for reasons that are impossible to understand, a tweet takes off, like this one, which mocked a far-fetched inflation theory propagated in a guest essay for the Times on July 8.

Under this theory, elevators and the elevator union are to blame for the housing affordability crisis. The most charitable interpretation, which the title of the piece nearly rules out, is that the high cost of elevators is emblematic of other supply problems exacerbated by onerous regulations. The tweet was retweeted over one thousand times. It seems that the progressive community took umbrage at the Times for breathing life into a YIMBY story that deflected attention away from the powerful companies actually setting rents and towards (largely powerless) elevator workers.

Some of the quote tweets were supportive, and some were not so kind. Matt Yglesias called me a “leftist professor” who “just resorts to bullying” his opponents, and even intimated that I was insensitive to the plight of the disabled community. (Perhaps he was miffed at a prior column.) Of course, the disabled care about access to elevators, but my tweet spoke to the price of housing, and profit-maximizing landlords should not, as a matter of economic theory, factor the fixed cost of elevators into their pricing decisions.

It would be nice for the Times to give some attention to an alternative and more plausible hypothesis behind the housing affordability crisis—namely, that hedge funds and private equity firms have been buying up properties that would otherwise go to households, creating an artificial scarcity in real estate markets, and thereby driving up rents. Matt Darling, who sports a globe emoji in his Twitter handle but is otherwise a decent fellow, questioned whether this alternative hypothesis was serious: “It seems unlikely to be a driving force – there are 146,375,000 houses in the United States. I’d be surprised if private equity buying ‘hundreds of thousands’ is a major contributor.” I promised him I would look into the matter. Here is what I found.

Investors Have Been Busy Gobbling Up Homes

In the first three months of 2024, investors bought 14.8 percent of homes sold, according to Realtor.com. In some cities, such as Springfield, Kansas City, and St. Louis, Missouri, investors purchased around one in five homes. Investor purchases hit their peak in December 2022, accounting for 28.7 percent of all home sales in America. Per MetLife Investment Management, institutional investors may control 40 percent of U.S. single-family rental homes by 2030.

Robert Reich posted a wonderful video to Twitter explaining how Wall Street investors could be driving up rents. He explains that home ownership, the primary vehicle for accumulating wealth, is out of reach for many Americans. Investors are not randomly making home purchases across the country, as Darling’s question above presumes, but instead are targeting bigger cities and neighborhoods that are home to communities of color in particular. In one neighborhood in Charlotte, North Carolina, Wall Street investors bought half the homes that sold in 2021 and 2022.

Such clustering of properties is occurring in several U.S. cities. A report from Drexel’s Nowak Metro Finance Lab found that between 2020 and 2021, 19.3 percent of sales of single-family homes in Richmond, Virginia, went to investors. It found that investors bought nearly a quarter of the homes in Jacksonville, Florida in the same period.

But Are These Investments Enough to Raise Housing Prices?

Economists have recently begun to explore the relationship between institutional investment and home and rental prices.

- Researchers at the Federal Reserve Bank of St. Louis (2020) found that purchases by institutional investors, as measured by the share of properties owned by all institutional investors collectively in a Metropolitan Statistical Area, increase (1) the price-to-income ratio, especially in the bottom price-tier, the entry point for first-time buyers, and (2) the rent-to-income ratio generally, especially where the housing supply elasticity is high. By treating all institutional investors in the aggregate, and thus as if their properties were owned by a single entity, however, the St. Louis Fed study may have overlooked the incremental explanatory power of clustering properties by a single institutional owner in a given neighborhood.

- Watson and Ziv (2021) analyze the relationship between ownership concentration and rents in New York City, finding that a ten percent increase in concentration is correlated with a one percent increase in rents.

- Using a database of all multifamily real estate transactions of greater than $2 million, Tapp and Peiser (2022) estimated the distribution of Herfindahl-Hirschman Indices across all Opportunity Zones within the United States, showing that investors have consolidated a growing share of the affordable rental housing market.

- Linger, Singer and Tatos (2022) used property tax data from the Florida Department of Revenue to calculate the individual market shares for owners of rental properties based on the number of units owned. For each Census Tract in the state, they calculated the consolidation of properties from 2015 through 2022, and then tested whether such consolidation explains increases in rental prices or increases in rental inflation or both, controlling for other factors that might confound the concentration-inflation relationship. They found statistically and economically significant effects in both relationships.

- Using mergers of private-equity backed firms to isolate quasi-exogenous variation in concentration of ownership at the neighborhood level, Austin (2022) found that shocks to institutional ownership cause higher prices and rents.

- Coven (2023) estimated a demand system to study the effects of institutional investors’ conversion of large fractions of owner-occupied housing into rentals in the suburbs of U.S. cities. He found that institutional investors decreased the housing available for owner-occupancy by 30 percent of the homes they converted, and that their demand shock raised the price of housing purchased. He also found that such behavior made it easier for renters to access neighborhoods that previously had few rentals.
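Several of the studies above rely on concentration measures such as the Herfindahl-Hirschman Index (HHI), which is the sum of squared market shares. As a rough illustration only, here is a minimal sketch of an HHI computed from unit counts, in the spirit of the unit-based market shares described above. The function name `hhi_from_units`, the owner labels, and the unit counts are all invented for this example; none of them come from the cited papers.

```python
def hhi_from_units(units_by_owner):
    """Return the Herfindahl-Hirschman Index on the 0-10,000 scale.

    Each owner's market share is its percentage of all rental units in the
    market; the index is the sum of the squared percentage shares.
    """
    total_units = sum(units_by_owner.values())
    if total_units <= 0:
        raise ValueError("market must contain at least one unit")
    return sum((100.0 * units / total_units) ** 2
               for units in units_by_owner.values())


# Hypothetical census tract: invented owners and unit counts, not real data.
dispersed = {"owner_a": 25, "owner_b": 25, "owner_c": 25, "owner_d": 25}
consolidated = {"owner_a": 70, "owner_b": 10, "owner_c": 10, "owner_d": 10}

print(hhi_from_units(dispersed))     # 2500.0 (four equal owners)
print(hhi_from_units(consolidated))  # 5200.0 (one owner holds 70 percent)
```

The total number of units is the same in both made-up tracts; only the distribution of ownership changes, which is the kind of variation the neighborhood-level studies try to exploit.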

A seminal lesson in industrial organization is that price coordination is easier, all else equal, when markets are concentrated. Indeed, merger enforcement is partially motivated by the prospect of coordinated pricing effects that flow from a merger. So it shouldn’t surprise anyone that, as institutional investors buy up the available stock of housing in a local market, housing prices rise.

An interesting development that might diminish the impact of clustering properties in a given neighborhood under a single roof, however, is the widespread adoption of pricing algorithms by third-party information aggregators. In March 2024, the Department of Justice opened a criminal investigation of RealPage, a top developer of property-pricing software. A class of renters as well as attorneys general from Washington, D.C. and Arizona brought lawsuits against the beleaguered software company. To the extent monopoly pricing can be achieved even by atomistic property owners via outsourcing the pricing decision to a third party, it might not be necessary to consolidate properties to exercise pricing power.

Policy Implications

In several European countries, such as Spain, Portugal and Greece, foreign investors were encouraged to buy property in exchange for a pathway to citizenship. The programs resulted in a flood of investment and speculation, causing rents to rise above what could be afforded by residents. The incentive plans have since been pared back, with countries hoping to re-direct investment into undeveloped pockets outside of the major cities.

The rather obvious economic lesson is that governments have an obligation to their voters, and free-market forces should not be allowed to price local residents out of their own neighborhoods. The same insight could be applied to domestic speculators in the United States.

In December 2023, Senator Merkley (D-Oregon) introduced the End Hedge Fund Control of American Homes Act, which would force large corporate owners to divest from their current holdings of single-family homes over ten years. Entities that fail to divest homes they own in excess of a 50-home cap would be taxed $50,000 for each excess home. And hedge funds would pay that fine if they own any homes at all after ten years.
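To make the cap-and-tax arithmetic concrete, here is a minimal sketch based only on the figures described above (a 50-home cap and $50,000 per excess home). It is an illustration, not a reading of the bill's text: it ignores the ten-year phase-in and the separate treatment of hedge funds, and the function name `excess_home_tax` and the portfolio sizes are hypothetical.

```python
CAP_HOMES = 50           # home cap, as described in the text
TAX_PER_EXCESS = 50_000  # dollars per home above the cap, as described in the text


def excess_home_tax(homes_owned, cap=CAP_HOMES, rate=TAX_PER_EXCESS):
    """Return the tax owed on homes held above the cap (zero at or below it)."""
    excess = max(0, homes_owned - cap)
    return excess * rate


# Hypothetical portfolios, invented for illustration.
print(excess_home_tax(40))     # 0 (under the cap)
print(excess_home_tax(1_050))  # 50000000 (1,000 excess homes)
```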

Limiting the home ownership of hedge funds and other institutional investors makes economic sense, particularly in concentrated local real estate markets. Government funding of new housing projects also could address the imbalance between private supply and demand. Although it is generally unpopular among neoliberal economists and could weaken incentives to make further investments, capping rental inflation at five percent per year, as intimated by President Biden in this week’s NATO press conference, could also spell relief for renters. And pursuing common pricing algorithms under the antitrust laws could restore renters to the place they would have been absent the alleged price-fixing conspiracy, albeit with a significant lag, given the slow pace of antitrust.

All of these ideas are superior to focusing our energies on elevators. If only we could get the Times to listen.

Did you ever notice that the same neoliberal economists are quoted routinely by economics reporters in the mainstream press? Take Ken Rogoff. He guest authors pieces on public policy at Brookings, is a professor at Harvard, semi-frequently authors op-eds, and is widely quoted in the media. While not quite as high profile as his colleagues Jason Furman and Larry Summers, Rogoff has been extremely impactful.

To give you an idea, I have compiled some statistics on the number of times famous economists have been quoted in the New York Times and Wall Street Journal since January 2020.

| Economist | New York Times | Wall Street Journal |
| --- | --- | --- |
| Jason Furman | 187 | 156 |
| Larry Summers | 153 | 164 |
| Angus Deaton | 50 | 12 |
| Joseph Stiglitz | 42 | 16 |
| Kenneth Rogoff | 20 | 34 |
| Isabella Weber | 8 | 6 |

As the table shows, Rogoff has been quoted 20 times in the New York Times since the start of the pandemic, trailing Nobel prize-winning progressives Angus Deaton and Joseph Stiglitz by only a small margin. Rogoff’s quote count in the more conservative Wall Street Journal exceeds that of either progressive, despite their international acclaim. (The table also shows the dependency of these papers of record on Furman and Summers, two Obama-appointed centrists, and disciples of deregulator extraordinaire Robert Rubin, who often reject the progressive policies of Biden.) In any event, the 34 quotes from the Wall Street Journal in a little over four years are an impressive display of influence.

In 2010, Rogoff co-wrote, with Carmen Reinhart, a paper titled “Growth in a Time of Debt” that came to define the acceptable boundaries of fiscal policy in the 2010s. And, while Rogoff has complained about being dismissed as an austerity-peddler, the fact remains that he and Reinhart became the go-to citation for governments when they slashed welfare spending and imposed sharp cost controls. The analysis that Rogoff and Reinhart (R&R) lean on was flawed from the start, however, and, for anyone without an Ivy League professorship, their oversight probably would have been career-ending. Despite efforts to substantiate his claims about the relationship between debt and growth rates in more recent work, huge methodological and theoretical issues remain. That Rogoff continues to be treated as a credible voice on economic issues is a striking indictment of our media ecosystem.

A Fundamentally Flawed Study

“Growth in a Time of Debt” was published to great fanfare when it came out with the financial crash of 2008 just barely in the rearview. The paper claimed to find a damning reason to pump the brakes on aggressive debt-financed government stimulus programs: When a country’s debt exceeds 90 percent of GDP, R&R asserted, its growth rate takes a massive hit, estimated at a drop of roughly three percentage points annually compared to countries below the cutoff—from 2.9 percent growth for countries with ratios between 30 and 90 percent to -0.1 percent growth for countries with ratios above 90 percent.

When a student and two professors at the University of Massachusetts (Thomas Herndon, Michael Ash, and Robert Pollin, or HAP) failed to reproduce those findings, they dug into the data and in 2013 found something else instead. R&R had made significant errors in their Excel sheet and sampling that inflated the number. Among other things, R&R’s calculations excluded several years of data from New Zealand. As noted above, R&R estimated real GDP growth to be -0.1 percent for countries with more than 90 percent debt-to-GDP; after correcting R&R’s inaccurate data, the UMass researchers found that the real figure was 2.2 percent. After corrections, the difference between real growth rates for countries above the 90 percent threshold and countries with ratios below 90 percent shrank from around three percentage points to just one percentage point.

Rogoff and Reinhart did, in fairness, admit the error and correct it, making the same argument but with less dramatic figures. With other colleagues, they also produced more work that continued to show a similar trend. Even absent computational issues, however, there are still methodological issues and theoretical shortcomings that they never overcame.

For a start, R&R made a causal claim based on only correlational data, as several economists have pointed out. It could be the case that weaker growth leads to more government debt, rather than the reverse. Additionally, R&R largely treated debt levels as a binary (either at or above 90 percent of GDP, or below it) rather than as a continuous variable, which may also have shaped the result. Had they used debt levels as a continuous variable, they could have modeled how each additional point of the debt-to-GDP ratio correlates with growth rates. Their method, however, merely sorted countries into two buckets: those with a “debt overhang” (their term, seemingly coined here, for when debt/GDP is greater than 90 percent) and those without. Then they more or less just took the averages (the averages were country-weighted in the original R&R).
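To see why the weighting choice in a bucket-and-average method can matter, consider a minimal sketch with invented numbers. Nothing below is drawn from R&R's or HAP's data; the countries, debt ratios, and growth rates are hypothetical, and the helper names are made up for this illustration. A country-weighted average first averages each country's years and then averages across countries, so a country with a single bad year counts as much as a country with many ordinary years.

```python
from statistics import mean

# Invented country-year observations:
# (country, debt-to-GDP in percent, real GDP growth in percent).
observations = [
    ("A", 95, 2.5), ("A", 100, 2.0), ("A", 105, 3.0), ("A", 110, 2.5),
    ("B", 92, -7.0),
    ("C", 40, 3.0), ("C", 50, 3.5),
]

THRESHOLD = 90  # the debt-to-GDP cutoff discussed in the text


def country_weighted_mean(rows):
    """Average each country's growth first, then average those country means."""
    by_country = {}
    for country, _, growth in rows:
        by_country.setdefault(country, []).append(growth)
    return mean(mean(values) for values in by_country.values())


def pooled_mean(rows):
    """Average over all country-years, so each year counts equally."""
    return mean(growth for _, _, growth in rows)


high_debt = [row for row in observations if row[1] >= THRESHOLD]

print(country_weighted_mean(high_debt))  # -2.25
print(pooled_mean(high_debt))            # 0.6
```

In this made-up sample, country B's single year drags the country-weighted figure below zero, while the pooled figure stays positive. Neither number says anything about causation; the sketch only shows how sensitive a two-bucket comparison can be to the averaging rule.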

R&R’s arbitrary 90 percent threshold is also worth discussing. For a start, the way that this threshold is determined is somewhat ambiguous, but it seems pertinent that when R&R published their most influential paper in 2010, that was a level that seemed to be fast approaching for many wealthy countries. Yet when HAP corrected the computational errors, the entire difference in growth rates was determined by the extreme outliers—that is, countries with debt-to-GDP ratios below 30 percent or above 120 percent. The UMass paper showed that, without those extreme outliers, there was no longer any strong correlation between debt and economic growth.

Another issue with the use of debt-to-GDP is that it does not account for government assets. Governments can raise revenue at any point by selling off ships, planes, tanks, land, buildings, and more or by selling intellectual property rights or exclusive leasing or permitting to companies. That there isn’t a serious effort to do so after countries hit that 90 percent threshold (or even go well past it) seems to indicate that in practice, the impacts of a debt overhang are preferable to taking extreme measures to stay below the red line.

Now R&R have insisted that they were never pushing austerity—and in fairness, they did include the caveat that fiscal stimulus should have been rolled back slowly. They never seemed to mind, however, that they were made famous by politicians like former Speaker of the House Paul Ryan and former British Chancellor George Osborne, who constantly cited R&R as a reason to impose austerity as rapidly as they could. In the wake of the controversy created by HAP’s 2013 debunking of “Growth in a Time of Debt,” journalist John Cassidy pointed out how R&R’s protestations in response to the austerity-pusher critiques completely clashed with the way R&R had marketed the paper when it was first published. Indeed, R&R were among 20 economists who publicly backed Osborne’s austerity policy in an open letter to The Sunday Times in February of 2010.

The revelation of terrible data management and the defensive response from R&R was enough for Cassidy to question whether listening to any economists at all was worthwhile. You would think, at the very least, economic reporters would discount R&R’s opinions on fiscal matters, but they haven’t even done that. For a mistake that would have likely derailed anyone with a less impressive pedigree, R&R have bounced back, still producing research, still pushing a (somewhat) toned down version of their argument from “Growth in a Time of Debt,” and still opining on economic policy in the media. Rogoff, in particular, is still quoted aggressively (as shown in the table above) and is using the new era of high interest rates to try to resurrect his old pet theory.

Old Wine in New Bottles

To be clear, unsustainably high levels of debt can be extremely problematic. But remember, that is not what R&R were saying. They were arguing that countries that experienced a debt overhang suffered long-term (economically significant) negative effects on their growth rates. Trying to use present circumstances to say, “See, we were right all along and all those people who decried our calls for fiscal responsibility were fools,” which is essentially what Rogoff has argued in a couple of op-eds this year, relies on a mischaracterization of both what R&R actually said and what their critics argued. In particular, Rogoff points to Adam Tooze using the word “austerity” over 100 times in his book Crashed. It is true that not all fiscal responsibility is austerity, and that not all austerity is fiscally responsible. But when Rogoff says things like Biden and Trump would both “blow up the debt,” he’s clearly hinting that mere “responsibility” is not all that he’s after.

After President Biden’s 2024 State of the Union address, Rogoff told Bloomberg that “Biden’s speech suggested blowing up the debt.” This is simply false, as Biden called for his policy proposals to be funded by higher taxes on the wealthy. Plus, this story ran after the president released his budget proposal, which includes cutting the deficit by $3 trillion over ten years. Maybe Rogoff was interviewed before that, but anyone serious about advising policy as a neutral expert would have offered an updated statement. Would the debt continue to grow under Biden’s plan? Yes. Would it “blow up”? No.

And while Rogoff also asserts that Trump would likely blow up the budget, he includes the caveat that “we really have no idea what Donald Trump will do.” Anyone who creates this level of false equivalency between Biden and Trump on responsible budgeting is either oblivious or a total hack. Rogoff is known for Republican leanings; he advised John McCain in 2008 and reportedly “warmed up” to Trump after he took office.

Add it all up and we have a conservative economist who helped create a global push for austerity trying to resurrect that narrative, implying that Biden is no better than Trump on budget issues.

Rogoff’s legacy is one of creating cover for conservative governments to prematurely abandon fiscal stimulus, leaving millions of people out of work. What rocketed “Growth in a Time of Debt” to its high status among economists was how clear and dramatic it found the risk of high debt to be. That was proven to be bunk. But it was deeply rooted in the ethos of the austerity movement, so much so that the hawks at the Committee for a Responsible Federal Budget felt the need to defend their own position in the wake of the R&R controversy. Why is Rogoff still in reporters’ rolodexes?

Are the curtains closing on TikTok? The sudden arrival on stage of the TikTok Divest-or-Ban law would seem to indicate so. TikTok’s rivalrous understudies—especially Facebook and Google—wait impatiently in the wings, salivating over the prospect of capturing the company, its users, or, most tantalizing, its advertisers’ dollars.[1] But peek behind the curtain and you might see a highly stylized, kabuki theatre performance orchestrated by none other than Dark Brandon, President Biden’s no-nonsense alter-ego.[2]

To avoid getting swept off our feet with all the razzle-dazzle, let’s run the “before they were stars” reel.

- 2017: TikTok was born when China-based ByteDance bought US-based Musical.ly.

- 2019: TikTok entered into a consent order with Trump’s Federal Trade Commission in connection with certain pre-2017 children’s privacy issues. In this settlement, TikTok agreed to be subject to a permanent injunction from a federal court, as well as future FTC oversight.[3]

- 2020: Trump’s Committee on Foreign Investment in the United States (CFIUS) recommended a ban, which Trump imposed via an executive order. Multiple federal courts banned the bans on a variety of legal grounds, explicitly pointing to the lack of evidence.

- 2021: Biden revoked Trump’s executive order targeting TikTok and replaced it with a new order directing the Commerce Department to review apps with ties to foreign adversaries like China. Biden’s order emphasized a “rigorous, evidence-based analysis” to evaluate potential risks posed by apps like TikTok.

- 2022: TikTok launched Project Texas, the plan to cordon off TikTok’s functions involving access to US user data and content moderation decisions, isolating these from potential Chinese government interference, with extensive oversight and control by the American company Oracle, CFIUS, and additional independent auditors.

And that’s where things stood until the 2022 midterm elections, when Republicans won a slim majority in the House. That led to the 2023 creation of the House Select Committee on the Strategic Competition Between the United States and the Chinese Communist Party, a name definitely not ad-libbed by its chairman, Michael Gallagher (R-WI).[4] He is not a fan of Project Texas, the TikTok plan to resolve concerns about potential Chinese exploitation of Americans via the app (described above). Gallagher had seen this show many times before and knew the lines by heart—at the right moment, grab the spotlight and ban TikTok. Behind the scenes, he drafted a law that would accomplish just that. Or so he thought.

With great fanfare, on March 5, 2024, Gallagher introduced the “Protecting Americans from Foreign Adversary Controlled Applications Act,” braying that “TikTok’s time in the United States is over.”[5] The bill has a showstopping feature. He put the headliners, TikTok and ByteDance, in big, bright lights.[6] By explicitly naming them in the law, Gallagher avoided presidential hijinks: the law is self-effectuating, without any need to rely on Biden (given his apparent Project Texas acquiescence). Instead, the law imposes financial penalties on third-party gatekeepers (like Apple and Google app stores) to ensure they drop the platform like an aging starlet when the divest-or-ban deadline arrives. Gallagher’s visionary directorial choice seemed even more inspired when Trump, in a wild plot twist, vocally supported TikTok after the bill was unveiled. Trump’s stated explanation was that such a ban would benefit Facebook. Regardless of rationale, the law closes all loopholes for a president to slow or stop a TikTok ban post-enactment. Under the law, neither President Biden nor a possible President Trump has any mechanism or maneuver to settle or slow that ban. At least, that was the script.

To cut to the climactic moment, Gallagher’s bill was quickly passed by the House, went on ice in the Senate, was reincarnated in a must-pass appropriations bill, and was signed by the president. And, as expected, it was promptly challenged in court. But understanding the details of that legislative journey shows why this law will be rejected by the court, without much need to substantively consider the merits of the privacy or national security allegations. This is how Dark Brandon arrived on stage, operating in the shadows instead of the limelight, no doubt delightedly watching Gallagher hoist himself on his own petard with his overly clever and rushed legislating.[7]

Under a suspension of House rules choreographed by Speaker Johnson (R-LA), a procedural gambit usually reserved for uncontroversial bills, the House voted in favor of Gallagher’s bill on March 13, 2024, just eight days after its introduction.[8] During the intervening days, only one House hearing occurred: the House committee responsible for advancing the bill met on March 7, but in secret and under an unusual expedited rule that is rarely invoked. While secret sessions are usually transcribed, and could be made public, there is no mechanism to release them solely to a court considering a constitutional challenge to the law.[9] Two days before the full House vote, the committee introduced into the record a short document composed mostly of citations to unverified news reports.[10] One day prior to the full vote, House members met in an “informal, confidential briefing” with national security officials (these briefings are never transcribed or recorded), and House members had contradictory reactions to the import of the shared information.[11]

After the bill passed the House, it went to the Senate, where astute observers expected it to languish before Senator Cantwell, in charge of the assigned Senatorial casting couch (Commerce Committee). Reading the same cue cards, Johnson and Gallagher plotted next steps. After five weeks, they had their blue script.[12] On April 18, a little over five weeks after the bill arrived in the Senate, Gallagher and Johnson cast it aside. The new “it girl” was the must-pass appropriations bill just received from the Senate, for $95 billion in aid to Ukraine and Israel, a matter near and dear to Democrats, as they well knew. Gallagher tweaked the bill’s divestment timeline and Johnson attached Gallagher’s revised bill to the appropriations bill; in exchange, Biden and Cantwell made public statements that they would support the appropriations bill with the TikTok ban.[13] The full House voted on April 20, with final passage on April 24. Per the statute, TikTok had 165 days to file a legal challenge (October) and 270 days to divest (January).[14]
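The 165-day and 270-day windows can be checked with simple date arithmetic. The sketch below assumes the clock starts on April 24, 2024, the final-passage date given above, and counts plain calendar days; how the statute itself counts days is not addressed here, so treat the start date and counting rule as assumptions.

```python
from datetime import date, timedelta

# Assumption: the clock starts at final passage on April 24, 2024.
ENACTMENT = date(2024, 4, 24)

challenge_deadline = ENACTMENT + timedelta(days=165)
divest_deadline = ENACTMENT + timedelta(days=270)

print(challenge_deadline.isoformat())  # 2024-10-06
print(divest_deadline.isoformat())     # 2025-01-19
```

Under those assumptions the results land in October and January, matching the months noted in the text.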

Continuing the breakneck pace, TikTok filed an earlier-than-expected legal challenge on May 7, asserting violations of the First Amendment, the Bill of Attainder Clause, and the Fifth Amendment.[15] But, like going to the opera, you don’t need to understand the words to understand this play. All you need to know is that TikTok challenged the constitutionality of the law, and the only way the government can overcome those challenges is with sufficient evidence of national security danger.

Ah, but there’s the rub. What is the evidence of national security danger? Let’s welcome to the stage with a big round of applause… Dark Brandon! Following Gallagher-Johnson’s first misstep in explicitly naming TikTok in the law (thus squarely implicating constitutional rights),[16] the duo made a second misstep: a rushed enactment, leaving the record devoid of meaningful evidence with which to justify the law. On that empty stage, Dark Brandon alone decides what evidence (if any) to present.

Deference to Biden’s assessment of TikTok’s danger can hardly be what Gallagher envisioned. After all, avoiding that reliance was the driving impetus for the bill. Take an intermission to consider big-picture what evidence Dark Brandon is likely to submit in the absence of any meaningful House record. One presumes that the Biden administration does not possess robust evidence of national security danger, or this administration would have earlier pursued a ban. Even if such evidence exists, how likely is it that Dark Brandon will present it to the court; wouldn’t that only demonstrate that he failed to protect the public himself via CFIUS?[17]

This evidentiary misstep is joined with a third error, a flawed set design that Dark Brandon will use as a trap door to make the law disappear. The law has a weird framing for TikTok’s constitutional challenge, requiring the company to file its case in the U.S. Court of Appeals for the D.C. Circuit rather than the standard off-Broadway opening at a federal district court. Perhaps Gallagher perceived district judges as more likely to rule in TikTok’s favor; perhaps he was trying to deprive TikTok of forum selection.[18] Regardless, the statute’s jurisdictional mandate means no procedure exists for conducting discovery or presenting evidence.[19] Instead, the parties (and court) will play it by ear. They plan to submit evidence by attaching exhibits to their briefs, an indication the evidence will be minimal.[20] Moreover, Dark Brandon has indicated that he might not submit anything particularly sensitive, making no plans to litigate pursuant to the Classified Information Procedures Act.[21] He has indicated he might submit evidence under seal and on an ex parte basis, which means no one, not TikTok, not Gallagher, will ever know what that evidence is or whether it was weak.[22]

And finally, Gallagher’s not-ready-for-prime-time bill has yet another flaw that Dark Brandon no doubt recognized would increase the likelihood of judicial invalidation. In his histrionic pursuit of TikTok, Gallagher insisted on an extremely short lead-up to the ban. He picked a date out of thin air, with no rhyme or reason. The date arrives so soon, even with the trivially enlarged time in the amended bill, that it is practically impossible for the company to divest. The court will perceive the “divest or ban” law as a pure ban, a staging that favors TikTok. In addition, a fast-approaching date translates into expedited briefing and decision-making.[23] In rushed proceedings, judges favor the status quo. That is particularly true here, where the relevant burdens of proof likely favor TikTok.

One final foreshadowing: Gallagher’s attempt to upstage the president with an eccentric judicial route failed to take into account who gets to call “cut” to end scene. Assuming TikTok prevails in the appellate court, the president alone decides whether to seek review by the Supreme Court.[24] It is unlikely that either Biden or Trump pursues an appeal to the Supreme Court, given that neither of them desires a TikTok ban on these terms. They will leave the law on the cutting room floor.[25] Facebook and Google will plot other ways to undermine TikTok’s success.

With deft maneuvering, Dark Brandon used Gallagher-Johnson’s lust for a TikTok ban against them. Without jeopardizing his own China hawk bona fides, he traded nothing (a TikTok ban that will die in court) for something (Ukraine/Israel aid), all the while maintaining strategic flexibility on numerous China topics and the ability to protect Americans from actual harm when and if it arises (via the CFIUS hammer).[26] A standing ovation for Dark Brandon!

Megan Gray is the founder of GrayMatters Law & Policy, a boutique firm focused on Information, Internet, Innovation, and Intangibles. Megan has worked as corporate counsel, litigator, and lobbyist for startups, established companies, non-profit organizations, individuals, and trade associations.

[1] “How Google, Meta and Snap’s battle with TikTok in short-form video is playing out,” https://digiday.com/marketing/how-google-meta-and-snaps-battle-with-tiktok-in-short-form-video-is-playing-out/. See also https://www.economist.com/business/2024/03/13/will-tiktok-still-exist-in-america (“If Americans redirect the roughly 3 trillion minutes of attention they lavished on TikTok last year to other apps already on their phones, Meta and Alphabet, the dominant duo in online advertising, will be the winners.”).

[2] Dark Brandon is a satirical anti-hero of President Biden that emerged as an internet meme in 2022. It portrays Biden as a powerful, no-nonsense leader who is not to be trifled with. On cue, President Biden joined TikTok on Feb. 12, 2024 with a Dark Brandon meme. On June 3, 2024, Trump also joined TikTok, further underscoring the lack of genuine security concerns with the platform.

[3] As the name indicates, this court order on TikTok’s privacy practices is permanent — it never expires. Interestingly, the Democrat commissioners explained in a concurring statement that they wanted to include TikTok’s officers in the consent order but the Republican commissioners would not agree.

[4] Conveniently for the theme of this essay, his wife is a Broadway actress.

[5] https://selectcommitteeontheccp.house.gov/media/press-releases/gallagher-bipartisan-coalition-introduce-legislation-protect-americans-0

[6] Division H, Section 2(g)(3)(A)(i) and (ii). The headings underscore the statute’s oddity, with the law dependent on the Definitions section to expressly categorize TikTok and ByteDance as “foreign adversary controlled application,” while excluding everything else, leaving that large universe for presidential evaluation similar to standard CFIUS review. Bafflingly, Gallagher did not include a “findings” section in the statute whereby Congress would assert its factual determinations and purposes that justify the law. See https://lawreview.uchicago.edu/print-archive/enacted-legislative-findings-and-purposes. Including Findings is commonplace and Gallagher gave no explanation for their absence. Courts often look to these Findings when assessing the constitutionality of a law, particularly when the connection to Congress’ power is not self-evident.

[7] People outside the DC bubble might wonder why Biden signed the bill if he was not in favor of it. Suffice it to say that Biden wouldn’t win the election year Oscar by letting Gallagher-Johnson goad him into defending TikTok. While Biden certainly recognizes the severe harm that an enemy-controlled social network could inflict, he won’t win votes by explaining the constitutional limitations in preventing speculative harm via an information platform. Of note, some tea-leaf readers believe Biden signed the bill after rejecting TikTok’s idea to give the government the conductor’s wand to run the show (or end it, if desired). But rejecting a preposterous idea does not equate to a decision to shut down the platform. Vetting officers and evaluating terabytes of content (in essence, running TikTok) would require staff and expertise well outside the government’s ambit, and, more importantly, be cultural and political quicksand. https://www.washingtonpost.com/technology/2024/05/29/tiktok-cfius-proposal-rejected/; https://www.washingtonpost.com/technology/2023/03/07/tiktok-ban-senate-proposal/.

[8] https://www.congress.gov/bill/118th-congress/house-bill/7521/all-actions

[9] It’s unclear if anything significant occurred during the secret session apart from the vote roll call. https://crsreports.congress.gov/product/pdf/R/R42106. See House Rule 11, section 2(g)(1), https://budgetcounsel.com/laws-and-rules/%C2%A7361-house-rule-xi-procedures-of-committees-and-unfinished-business/

[10] The report is 18 pages, but stripping away everything except the purported evidence leaves less than a single page. https://www.congress.gov/congressional-report/118th-congress/house-report/417/1. It’s unclear what role, if any, this “legislative history” will play in the court case. Legislative history refers to the documents produced by Congress during the process of enacting a law. These documents can be used to help determine congressional intent or clarify ambiguous statutory language, but the intent and language in the TikTok ban are clear. The evidence in support of the ban, however, is not. Because the evidence is not part of the legislative history, the court may decline to consider it at all, or give it less weight because it was not directly considered by the legislators voting on the final bill.

[11] https://himes.house.gov/2024/3/himes-statement-on-protecting-americans-from-foreign-adversary-controlled-applications-act

[12] Standard operating procedure for screenwriting is to assign a different color of paper to each revised version of the script. The typical color sequence is white (original script), blue (first revision), pink (second revision), etc. https://en.wikipedia.org/wiki/Shooting_script

[13] Notably, Biden issued a press release on the day he signed the bill into law, but he did not mention TikTok at all, only Ukraine/Israel. https://www.whitehouse.gov/briefing-room/presidential-actions/2024/04/24/bill-signed-h-r-815/; https://www.morningstar.com/news/marketwatch/20240418277/bill-that-could-lead-to-tiktok-ban-gets-potential-new-path-to-becoming-law-soon; https://www.congress.gov/bill/118th-congress/house-bill/815/all-actions

[14] Prior to the divest/ban date, the statute requires TikTok to build a special portability conduit to deliver user data to individual users.

[15] The First Amendment is of course about free speech. The Fifth Amendment is about due process. Under the Fifth Amendment, TikTok also alleged improper taking of private property without compensation. Fewer people are familiar with the constitutional prohibition on bills of attainder. A bill of attainder is a legislative act that declares someone guilty of a crime and punishes them without a trial, for example by seizing their property. Bills of attainder violate the constitutional principles of separation of powers, by allowing the legislature to exercise judicial powers, and of due process, by punishing without trial (Constitution Article I, Section 9).

[16] Constitutional law nerds will appreciate that, by this device, TikTok’s challenge is both “as applied” and “facial,” given the peculiar nature of the law, which namechecks TikTok for banning. This provides TikTok with an easier legal standard for review of its constitutional claims.

[17] In the future, if the government obtains sufficient evidence on the national security danger, the government will still have the ability to seek a ban via CFIUS, albeit perhaps on a different component of that authority than what Trump used.

[18] Another possibility is that Gallagher (a non-lawyer) opted for the appellate court as his “second choice” after learning that he couldn’t require TikTok to go straight to the Supreme Court. Congress cannot expand the Court’s original jurisdiction beyond what is stated in the Constitution. https://en.wikipedia.org/wiki/Original_jurisdiction_of_the_Supreme_Court_of_the_United_States

[19] As the parties noted in a court filing, the law’s designation of the appellate court as the court of original jurisdiction means that neither the Federal Rules of Civil Procedure nor the Rules of Appellate Procedure apply, and there is no underlying judicial or administrative record of evidence. Because they have creative license, the parties could ask for the appointment of a special master to oversee discovery, but neither did so. See https://storage.courtlistener.com/recap/gov.uscourts.cadc.40861/gov.uscourts.cadc.40861.1208624137.0.pdf

[20] The D.C. Circuit Court of Appeals doesn’t have a rule for page limits on exhibits attached to briefs, and no one knows what rules apply here in any event. That said, standard practice in other courts is fewer than 40 pages. https://www.cadc.uscourts.gov/internet/home.nsf/Content/VL%20-%20RPP%20-%20Circuit%20Rules/%24FILE/RulesFRAP20240401.pdf

[21] Expert Backgrounder: Secret Evidence in Public Trials Protecting defendants and national security under the Classified Information Procedures Act (CIPA), https://www.justsecurity.org/86812/secret-evidence-in-public-trials-protecting-defendants-and-national-security-under-the-classified-information-procedures-act/. Indeed, the government seems to contemplate nothing more than a plain-vanilla protective order for confidential business information to protect business interests. See Joint Motion for Stipulated Protective Order, https://www.courtlistener.com/docket/68506893/01208630251/tiktok-inc-v-merrick-garland/

[22] If the government submits secret evidence, TikTok will presumably double down on its due process violation claim.

[23] Briefing is scheduled to be complete by August 15, with oral argument on September 16.

[24] The wacky pathway that Gallagher imposed for legal challenges to the law raises the prospect that the Supreme Court could be deprived of jurisdiction to hear an appeal even if sought by a party. https://www.reuters.com/legal/transactional/column-no-judge-shopping-tiktok-2024-05-08/ Related, depending on when the appellate court issues its decision, Dark Brandon could also deprive the next president of the ability to seek Supreme Court review. For the Supreme Court to hear a case, a party must file a petition for writ of certiorari within 90 days of entry of the appellate court decision. The 90th day prior to January 20, 2025 (when Trump might be sworn in) is October 22, 2024.
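For readers who want to check the calendar math in note 24, here is a minimal sketch in Python. It assumes a flat 90-day window counted in calendar days and ignores any extensions or weekend and holiday adjustments; the function names are illustrative only.

```python
from datetime import date, timedelta

# Assumption: a flat 90-calendar-day window to petition for certiorari,
# with no extensions and no weekend/holiday adjustments.
CERT_WINDOW_DAYS = 90


def petition_deadline(decision: date, window_days: int = CERT_WINDOW_DAYS) -> date:
    """Last day to petition, counting forward from the appellate decision."""
    return decision + timedelta(days=window_days)


def latest_decision_date(inauguration: date, window_days: int = CERT_WINDOW_DAYS) -> date:
    """Decision date whose petition window ends exactly on inauguration day."""
    return inauguration - timedelta(days=window_days)


inauguration = date(2025, 1, 20)
cutoff = latest_decision_date(inauguration)
print(cutoff)                     # 2024-10-22
print(petition_deadline(cutoff))  # 2025-01-20
```

Under these simplifying assumptions, a decision entered on or before the printed cutoff leaves the entire petition window inside the current presidential term.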

[25] Gallagher’s swan song ends on a bitter note, with no encore — he has resigned from Congress.

[26] Tellingly, the Chinese government seems unperturbed by the new law, even sending new pandas to the DC zoo as part of a positive diplomatic upswing. https://en.wikipedia.org/wiki/Panda_diplomacy and https://www.axios.com/local/washington-dc/2024/05/29/giant-pandas-return-dc-national-zoo.

The Justice Department’s pending antitrust case against Google, in which the search giant is accused of illegally monopolizing the market for online search and related advertising, revealed the nature and extent of a revenue sharing agreement (“RSA”) between Google and Apple. Pursuant to the RSA, Apple gets 36 percent of advertising revenue from Google searches by Apple users—a figure that reached $20 billion in 2022. The RSA has not been investigated in the EU. This essay briefly recaps the EU law on remedies and explains why choice screens, the EU’s preferred approach, are the wrong remedy focused on the wrong problem. Restoring effective competition in search and related advertising requires (1) the dissolution of the RSA, (2) the fostering of suppressed publishers and independent advertisers, and (3) the use of an access remedy for competing search-engine-results providers.

EU Law on Remedies

EU law requires remedies to “bring infringements and their effects to an end.” In Commercial Solvents, the Commission power was held to “include an order to do certain acts or provide certain advantages which have been wrongfully withheld.”

The Commission team that dealt with the Microsoft case noted that a risk with relying solely on a prohibition of the infringement was that “[i]n many cases, especially in network industries, the infringer could continue to reap the benefits of a past violation to the detriment of consumers. This is what remedies are intended to avoid.” An effective remedy puts the competitive position back as it was before the harm occurred, which requires three elements. First, the abusive conduct must be prohibited. Second, the harmful consequences must be eliminated. For example, in Lithuanian Railways, the railway tracks that had been taken away were required to be restored, restoring the pre-conduct competitive position. Third, the remedy must prevent repetition of the same conduct or conduct having an “equivalent effect.” The two main remedies are divestiture and prohibition orders.

The RSA Is Both a Horizontal and a Vertical Arrangement

In the 2017 Google Search (Shopping) case, Google was found to have abused its dominant position in search. In the DOJ’s pending search case, Google is also accused of monopolizing the market for search. In addition to revealing the contours of the RSA, the case revealed a broader coordination between Google and Apple. For example, discovery revealed there are monthly CEO-to-CEO meetings where the “vision is that we work as if we are one company.” Thus, the RSA serves as much more than a “default” setting—it is effectively an agreement not to compete.

Under the RSA, Apple gets a substantial cut of the revenue from searches by Apple users. Apple is paid to promote Google Search, with the payment funded by income generated from the sale of ads to Apple’s wealthy user base. That user base has higher disposable income than Android users, which makes it highly attractive to those advertising and selling products. Apple users are thought to generate 50 percent of ad spend even though they account for only 20 percent of all mobile users.
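Taking the two shares in the preceding paragraph at face value (50 percent of ad spend, 20 percent of mobile users), a back-of-the-envelope sketch shows what they imply about ad spend per user. The function name is illustrative, and the calculation assumes the two shares are measured over the same population of mobile users.

```python
from fractions import Fraction


def spend_per_user_ratio(spend_share: Fraction, user_share: Fraction) -> Fraction:
    """Ad spend per user inside a group, relative to spend per user outside it."""
    inside = spend_share / user_share               # group's spend per unit of users
    outside = (1 - spend_share) / (1 - user_share)  # everyone else's spend per unit of users
    return inside / outside


# Shares as stated in the text; exact fractions avoid floating-point noise.
ratio = spend_per_user_ratio(Fraction(50, 100), Fraction(20, 100))
print(float(ratio))  # 4.0
```

On those figures, the average Apple user attracts roughly four times the ad spend of the average non-Apple mobile user, which is why access to that user base is so valuable to advertisers.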

Compared to Apple’s other revenue sources, the scale of the payments made to Apple under the RSA is significant. It generates $20 billion in almost pure profit for Apple, which accounts for 15 to 20 percent of Apple’s net income. A payment this large and under these circumstances creates several incentives for Apple to cement Google’s dominance in search:

- Apple is incentivized to promote Google Search. This encompasses a form of product placement through which Apple is paid to promote and display Google’s search bar prominently on its products as the default. As promotion and display is itself a form of abuse, the treatment provides a discriminatory advantage to Google.

- Apple is incentivized to promote Google’s sales of search ads. To increase its own income, Apple has an incentive to ensure that Google Search ads are more successful than rival online ads in attracting advertisers. Because advertisers’ main concern is the return on their advertising spend, Google’s Search ads need to generate a higher return on advertising investment than ads placed with rival online publishers.

- Apple is incentivized to introduce ad blockers. This is one of a series of interlocking steps in a staircase of abuses that block any player (other than Google) from using data derived from Apple users. Blocking the use of Apple user data by others increases the value of Google’s Search ads and Apple’s income from Apple’s high-end customers.

- Apple is incentivized to block third-party cookies and the advertising ID. This change was made in its Intelligent Tracking Prevention browser update in 2017 and in its App Tracking Transparency pop-up prompt update in 2020. Each step further limits the data available to competitors and drives ad revenue to Google search ads.

- Apple has a disincentive to build a competing search engine or allow other browsers on its devices to link to competing search engines or the Open Web. This is because the Open Web acts as a channel for advertising in competition with Google.

- Apple has a disincentive to invest in its browser engine (WebKit). Such investment would allow users of the Open Web to see the latest videos and interesting formats for ads on websites. Apple sets the baseline for the web and underinvests in Safari to that end, preventing rival browser makers such as Mozilla from installing the full Firefox browser on Apple devices.

The RSA also gives Google an incentive to support Apple’s dominance in top-end or “performance smartphones,” and to limit Android smartphone features, functions and prices in competition with Apple. In its Android Decision, the EU Commission found significant price differences between Google Android and iOS devices, while Google Search has been the single largest source of traffic from iPhone users for over a decade.

Indeed, the Department of Justice pleadings in USA v. Apple show how Apple has sought to monopolize the market for performance smartphones via legal restrictions on app stores and by limiting technical interoperability between Apple’s system and others. The complaint lists Apple’s restrictions on messaging apps, smartwatches, and payments systems. However, it overlooks the restrictions that prevent app stores from using Apple users’ data and how Apple sets the baseline for interoperating with the Open Web.

It is often thought that Apple is a devices business. On the contrary, the size of its RSA with Google means Apple’s business, in part, depends on income from advertising by Google using Apple’s user data. In reality, Apple is a data-harvesting business, and it has delegated the execution to Google’s ads system. Meanwhile, its own ads business is projected to rise to $13.7 billion by 2027. As such, the RSA deserves very close scrutiny in USA v. Apple, as it is an agreement between two companies operating in the same industry.

The Failures of Choice Screens

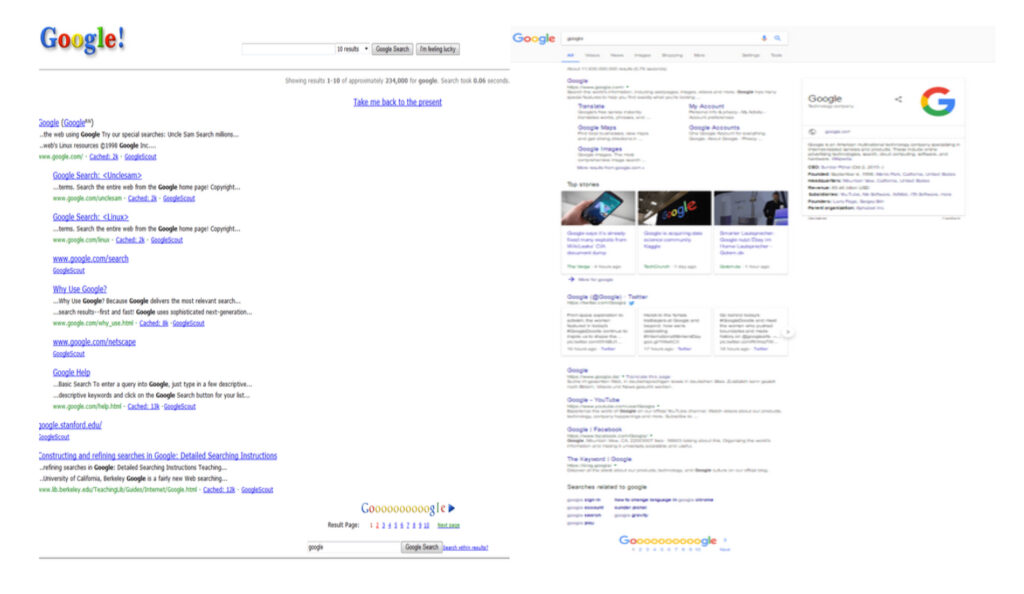

The EU Google (Search) abuse consisted in Google’s “positioning and display” of its own products over those of rivals on the results pages. Google’s underlying system is optimized for promoting results by relevance to the user’s query, using a system based on PageRank. It follows that promoting owned products over more relevant rivals requires work and effort. The Google Search Decision describes this abuse as being carried out by applying a relevance algorithm to determine ranking on the search engine results pages (“SERPs”). However, the algorithm did not apply to Google’s own products. As the accompanying figure shows, Google’s SERP has over time filled up with its own products and ads.

To remedy the abuse, the Decision compelled Google to adopt a “Choice Screen.” Yet this isn’t an obvious remedy to the impact on competitors that have been suppressed, out of sight and mind, for many years. The choice screen has a history in EU Commission decisions.

In 2009, the EU Commission identified as an abuse Microsoft’s tying of its web browser to its Windows software. Other browsers were not shown to end users as alternatives. The basic lack of visibility of alternatives was the problem facing the end user and a choice screen was superficially attractive as a remedy, but it was not tested for efficacy. As Megan Gray observed in Tech Policy Press, “First, the Microsoft choice screen probably was irrelevant, given that no one noticed it was defunct for 14 months due to a software bug (Feb. 2011 through July 2012).” The Microsoft case is thus a very questionable precedent.

In its Google Android case, the European Commission found Google acted anticompetitively by tying Google Search and Google Chrome to other services and devices and required a choice screen presenting different options for browsers. It too has been shown to be ineffective. A CMA Report (2020) also identified failures in design choices and recognized that display and brand recognition are key factors to test for choice screen effectiveness.

Giving consumers a choice ought to be one of the most effective ways to remedy a reduction of choice. But a choice screen doesn’t provide choice of presentation and display of products in SERPs. Presentations are dependent on user interactions with pages. And Google’s knowledge of your search history, as well as your interactions with its products and pages, means it presents its pages in an attractive format. Google eventually changed the Choice Screen to reflect users’ top five choices by Member State. However, none of these changes addressed the suppression of brands or competition, nor did they rectify the effects of presentation and display on the loss of variety and diversity in supply. Meanwhile, Google’s brand was enhanced by billions of users’ interactions with its products.

Moreover, choice screens have not prevented rival publishers, providers and content creators from being excluded from users’ view by a combination of Apple’s and Google’s actions. This has gone on for decades. Alternative channels for advertising by rival publishers are being squeezed out.

A Better Way Forward

As explained above, Apple helps Google target Apple users with ads and products in return for 36 percent of the ad revenue generated. Prohibiting that RSA would remove the parties’ incentives to reinforce each other’s market positions. Absent its share of Google search ads revenue, Apple may find reasons to build its own search engine or to invest in its browser in a way that would enable people to shop using the Open Web’s ad-funded rivals. Apple may even advertise in competition with Google.

Next, courts should impose (and monitor) a mandatory access regime. Applied here, Google could be required to operate within its monopoly lane and run its relevance engine under public interest duties in “quarantine” on non-discriminatory terms. This proposal has been advanced by former White House advisor Tim Wu:

I guess the phrase I might use is quarantine, is you want to quarantine businesses, I guess, from others. And it’s less of a traditional antitrust kind of remedy, although it, obviously, in the ‘56 consent decree, which was out of an antitrust suit against AT&T, it can be a remedy. And the basic idea of it is, it’s explicitly distributional in its ideas. It wants more players in the ecosystem, in the economy. It’s almost like an ecosystem promoting a device, which is you say, okay, you know, you are the unquestioned master of this particular area of commerce. Maybe we’re talking about Amazon and it’s online shopping and other forms of e-commerce, or Google and search.

If the remedy to search abuse were to provide access to the underlying relevance engine, rivals could present and display products in any order they liked. New SERP businesses could then show relevant results at the top of pages and help consumers find useful information.

Businesses, such as Apple, could get access to Google’s relevance engine and simply provide the most relevant results, unpolluted by Google products. They could alternatively promote their own products and advertise other people’s products differently. End-users would be able to make informed choices based on different SERPs.

In many cases, the restoration of competition in advertising requires increased familiarity with the suppressed brand. Where competing publishers’ brands have been excluded, they must be promoted. Their lack of visibility can be rectified by boosting those harmed into rankings for equivalent periods of time to the duration of their suppression. This is like the remedies used for other forms of publication tort. In successful defamation claims, the offending publisher must publish the full judgment with the same presentation as the offending article and displayed as prominently as the offending article. But the harm here is not to individuals; instead, the harm redounds to alternative publishers and online advertising systems carrying competing ads.

In sum, the proper remedy is one that rectifies the brand damage from suppression and lack of visibility. Remedies need to address this issue and enable publishers to compete with Google as advertising outlets. Identifying a remedy that rectifies the suppression of relevance leads to the conclusion that competition between search-results-page businesses is needed. Competition can only be restored if access is provided to the Google relevance engine. This is the only way to allow sufficient competitive pressure to reduce ad prices and provide consumer benefits going forward.

The authors are Chair Antitrust practice, Associate, and Paralegal, respectively, of Preiskel & Co LLP. They represent the Movement for an Open Web versus Google and Apple in EU/US and UK cases currently being brought by their respective authorities. They also represent Connexity in its claim against Google for damages and abuse of dominance in Search (Shopping).

Neoliberal columnist Matt Yglesias recently weighed into antitrust policy in Bloomberg, claiming falsely that the “hipsters” in charge of Biden’s antitrust agencies were abandoning consumers and the war on high prices. Yglesias thinks this deviation from consumer welfare makes for bad policy during our inflationary moment. I have a thread that explains all the things he got wrong. The purpose of this post, however, is to clarify how antitrust enforcement has changed under the current regime, and what it means to abandon antitrust’s consumer welfare standard as opposed to abandoning consumers.

Ever since the courts embraced Robert Bork’s demonstrably false revisionist history of antitrust’s goals, consumer welfare became antitrust’s lodestar, which meant that consumers sat atop antitrust’s hierarchy. Cases were pursued by agencies if and only if exclusionary conduct could be directly connected to higher prices or reduced output. This limitation severely neutered antitrust enforcement by design: with two minor exceptions described below, there was not a single (standalone) monopolization case brought by the DOJ after U.S. v. Microsoft for over two decades, presumably because most harm in the modern (digital) age did not manifest in the form of higher prices for consumers. Under the Biden administration, the agencies are pursuing monopoly cases against Amazon, Apple, and Google, among others.

(For the antitrust nerds, the DOJ’s 2011 case against United Regional Health Care System included a Section 2 claim, but it was basically included to bolster a Section 1 claim. It can hardly be counted as a Section 2 case. And the DOJ’s 2015 case to block United’s alleged monopolization of takeoff and landing slots at Newark included a Section 2 claim. But these were just blips. Also the FTC pursued a Section 2 case prior to the Biden administration against Qualcomm in 2017.)

Even worse, if there was ever a perceived conflict between the welfare of consumers and the welfare of workers or merchants (or input providers generally), antitrust enforcers lost in court. The NCAA cases made clear that injury to college players derived from extracting wealth disproportionately created by predominantly Black athletes would be tolerated so long as viewers with a taste for amateurism were better off. And American Express stood for the principle that harms to merchants from anti-steering rules would be tolerated so long as generally wealthy Amex cardholders enjoyed more luxurious perks. (Patrons of Amex’s Centurion Lounge can get free massages and Michelle Bernstein cuisine in the Miami Airport!) The consumer welfare standard was effectively a pro-monopoly policy, in the sense that it tolerated massive concentrations of economic power throughout the economy and firms deploying a surfeit of unfair and predatory tactics to extend and entrench their power.

Labor Theories of Harm in Merger Enforcement

In the consumer welfare era, which is now hopefully in our rear-view mirror, labor harms were not even on the agencies’ radars, particularly when it came to merger review. By freeing the agencies of having to construct price-based theories of harm to consumers, the so-called hipsters have unleashed a new wave of challenges, reinvigorating merger enforcement, particularly in labor markets. In October 2022, the DOJ stopped a merger of two book publishers on the theory that the combination would harm authors, an input provider in the book production process. This was the first time in history that a merger was blocked solely on the basis of a harm to input providers.

And the DOJ’s complaint in the Live Nation/Ticketmaster merger spells out harms to, among other economic agents, musicians and comedians that flow from Live Nation’s alleged tying of its promotion services to access to its large amphitheaters. (Yglesias incorrectly asserted that DOJ’s complaint against Live Nation “is an example of the consumer-welfare approach to antitrust.” Oops.) The ostensible purpose of the tie-in is to extract a supra-competitive take rate from artists.

Not to be outdone, in two recent complaints, the FTC has identified harms to workers as a critical part of its case in opposition to a merger. In its February 2024 complaint, the FTC asserts, among other theories of harm, that for thousands of grocery store workers, Kroger’s proposed acquisition of Albertsons would immediately weaken competition for workers, putting downward pressure on wages. That the two supermarkets sometimes poach each other’s workers suggests that workers themselves could leverage one employer against the other. Yet the complaint focuses on the leverage of the unions when negotiating over collective bargaining agreements. If the two supermarkets were to combine, the complaint asserts, the union would lose leverage in its dealings with the merging parties over wages, benefits, and working conditions. Unions representing grocery workers would also lose leverage over threatened boycotts or strikes.

In its April 2024 complaint to block the combination of Tapestry and Capri, the FTC asserts, among other theories of harm, that the merger threatens to reduce wages and degrade working conditions for hourly workers in the affordable handbag industry. The complaint describes one episode in July 2021 in which Capri responded to a public commitment by Tapestry to pay workers at least $15 per hour with a $15 per hour commitment of its own. This labor-based theory of harm exists independently of the FTC’s consumer-based theory of harm.

Labor Theories of Harm Outside of Merger Enforcement

The agencies have also pursued no-poach agreements to protect workers. A no-poach agreement, as the name suggests, prevents one employer from “poaching” (or hiring away) a worker from its competitors. The agreements are not wage-fixing agreements per se, but instead are designed to limit labor mobility, which economists recognize is key to wage growth. In October 2022, a health care staffing company entered into a plea agreement with the DOJ, marking the Antitrust Division’s first successful prosecution of criminal charges in a labor-side antitrust case. The DOJ has tried three criminal no-poach cases to a jury, and in all three the defendants were acquitted. For example, in April 2023, a court ordered the acquittal of all defendants in a no-poach case involving the employment of aerospace engineers. (Disclosure: I am the plaintiffs’ expert in a related case brought by a class of aerospace engineers.) Despite these losses, AAG Jonathan Kanter is as committed as ever to addressing harms to labor with the antitrust laws.

And the FTC has promulgated a rule to bar non-compete agreements. Whereas a no-poach agreement governs the conduct among rival employers, a non-compete is an agreement between an employer and its workers. Like a no-poach, the non-compete is designed to limit labor mobility and thereby suppress wages. Having worked on a non-compete case for a class of MMA fighters against the UFC that dragged on for a decade, I can say with confidence (and experience) that a per se prohibition of non-competes is infinitely more efficient than subjecting these agreements to antitrust’s rule-of-reason standard. Again, this deviation from consumer welfare has proven controversial among neoliberals; even the Washington Post editorial board penned an essay on why high-wage workers earning over $100,000 per year should be exposed to such encumbrances.

Consumers Still Have a Cop on the Beat

If you take Yglesias’s depiction literally, it means that the antitrust agencies under Biden have abandoned the protection of consumers. But nothing can be further from the truth. Antitrust enforcers can walk and chew gum at the same time. The list of enforcement actions on behalf of consumers is too long to reproduce here, but to summarize a few recent highlights:

- FTC blocked Illumina’s acquisition of Grail on behalf of cancer patients;

- FTC induced Nvidia to call off its attempt to acquire Arm on behalf of direct and indirect purchasers of semiconductor chips;

- DOJ blocked a merger of JetBlue and Spirit to protect travelers;

- FTC persuaded a federal court to force generic drug maker Teva to delist five unlawful patent listings on asthma inhalers in the FDA’s Orange Book on behalf of asthma patients;

- FTC is pursuing Amazon for alleged subscription traps and dark patterns on behalf of subscribers of Amazon’s Prime video service;

- FTC pursued fraud cases against firms, including Williams-Sonoma, for “Made in USA” claims;

- FTC took action against bill payment company Doxo for misleading consumers, tacking on millions in junk fees;

- DOJ has joined with multiple states to sue Apple for monopolizing smartphone markets on behalf of iPhone users;

- DOJ induced a subsidiary of Chiquita to abandon its proposed acquisition of Dole’s Fresh Vegetables division on behalf of vegetable consumers; and

- DOJ secured guilty pleas from two Michigan companies and two individuals for their roles in conspiracies to rig bids for asphalt paving services contracts in the State of Michigan.

Presumably Yglesias and his neoliberal clan have access to Google Search, Lina Khan’s Twitter handle, or the Antitrust Division’s press releases. It only takes a few keystrokes to learn of countless enforcement actions brought on behalf of consumers. Although this view is a bit jaded, one interpretation is that this crowd, epitomized by the Wall Street Journal editorial board and its 99 hit pieces against Chair Khan, uses the phrase “consumer welfare” as code for lax enforcement of antitrust law. In other words, what really upsets neoliberals (and libertarians) is not the abandonment of consumers, but instead any enforcement of antitrust law, particularly when it (1) prevents monopolists from expanding their monopolies to the betterment of their investors or (2) steers profits away from employers towards workers. In my darkest moments, I suspect that some target of an FTC or DOJ investigation funds neoliberal columnists and journals (looking at you, The Economist) to cook up consumer-welfare-based theories of how the agencies are doing it wrong. All such musings should be ignored, as the antitrust hipsters are alright.

There is a tension in the discourse as to the purpose of antitrust policy. In one camp, consumer welfare still reigns supreme. In another, there is greater acceptance that the consumer welfare standard is flawed, or at least controversial. Disciples of the first camp argue that antitrust policy should focus exclusively on increasing output as a proxy for consumer welfare.

Looking backwards, some have argued that the SCOTUS antitrust decisions focus almost entirely on output and price, consistent with consumer welfare. But is that how we should appraise what the Court was doing?

This short missive argues that SCOTUS does not articulate that it is applying consumer welfare. Even if it did, it does not tether that policy to notions of Congressional intent behind the antitrust laws. Indeed, where SCOTUS has said it is embracing Congressional intent, its opinion directly contradicts the notions of consumer welfare.

In a recent paper posted to SSRN titled “Antitrust’s Goals in the Federal Courts,” Herb Hovenkamp argues that to understand antitrust’s objective, we should focus on the words of SCOTUS and the federal courts: “Nearly all of this paper consists of statements from the Supreme Court and lower federal courts and concerns how they define and identify the goals of the antitrust laws.” It bears noting that Hovenkamp has been a strong advocate for consumer welfare theory, which would put him in the first camp. As Hovenkamp pointed out in a previous paper, “In sum, courts almost invariably apply a consumer welfare test.” And as Hovenkamp stated in yet another paper, there is good reason for the courts to do so: “it is a reasonable supposition that consumer welfare is maximized by offering consumers the best quality at the lowest price.”

While others have attempted to insert other policies into consumer welfare—or at least claim it is possible that other policies fit nicely within consumer welfare—the lodestar has always been output. As Hovenkamp professed: “[T]he country is best served by a more-or-less neoclassical antitrust policy with consumer welfare, or output maximization, as its guiding principle.”

Hovenkamp is correct that the courts have used the term consumer welfare. But the push for consumer welfare was not started in the Supreme Court, and the term has not been applied consistently in the way antitrust advocates of the consumer welfare standard might think.

Reading the tea leaves

The first mention of the words “consumer welfare” comes from U.S. v. Dotterweich, a case that sought to interpret the Federal Food, Drug and Cosmetics Act of 1938. The act sought to protect “against abuses of consumer welfare growing out of inadequacies in the Food and Drugs Act of June 30, 1906.”

It is not until 1976, in the Ninth Circuit’s case GTE Sylvania v. Cont’l T.V. Inc., that a court adopted the view that the purpose of antitrust was to protect consumer welfare. “Since the legislative intent underlying the Sherman Act had as its goal the promotion of consumer welfare, we decline blindly to condemn a business practice as illegal per se because it imposes a partial, though perhaps reasonable, limitation on intrabrand competition, when there is a significant possibility that its overall effect is to promote competition between brands.” The Court’s footnote 39 cites to Robert Bork’s 1966 piece. The notion of consumer welfare stayed in the Ninth Circuit for a few years, with Boddicker v. Arizona State Dental Ass’n and Moore v. James H. Matthews & Co.

In 1979, Chief Justice Burger wrote the Supreme Court’s decision in Sonotone. In that case, it appears Burger adopts Bork’s consumer welfare approach. But a careful reading of the full paragraph in which Burger cites Bork leaves that prescription uncertain:

Nothing in the legislative history of § 4 conflicts with our holding today. Many courts and commentators have observed that the respective legislative histories of § 4 of the Clayton Act and § 7 of the Sherman Act, its predecessor, shed no light on Congress’ original understanding of the terms “business or property.” Nowhere in the legislative record is specific reference made to the intended scope of those terms. Respondents engage in speculation in arguing that the substitution of the terms “business or property” for the broader language originally proposed by Senator Sherman was clearly intended to exclude pecuniary injuries suffered by those who purchase goods and services at retail for personal use. None of the subsequent floor debates reflect any such intent. On the contrary, they suggest that Congress designed the Sherman Act as a “consumer welfare prescription.” R. Bork, The Antitrust Paradox 66 (1978). Certainly, the leading proponents of the legislation perceived the treble-damages remedy of what is now § 4 as a means of protecting consumers from overcharges resulting from price fixing. E.g., 21 Cong.Rec. 2457, 2460, 2558 (1890). [emphasis added]

From there, the lower courts either cited Sonotone, Bork, the Merger Guidelines, or, in one case, Broadcast Music, which did not mention consumer welfare at all.

It was not until Jefferson Parish that the Court again mentioned consumer welfare, but only in a concurrence by Justice O’Connor (again citing Broadcast Music). Justice O’Connor wrote: “Dr. Hyde, who competes with the Roux anesthesiologists, and other hospitals in the area, who compete with East Jefferson, may have grounds to complain that the exclusive contract stifles horizontal competition and therefore has an adverse, albeit indirect, impact on consumer welfare even if it is not a tie.” And in the same year, the Court in NCAA v. Board of Regents of the University of Oklahoma again quoted Sonotone.

The words appear again in Atl. Richfield Co. v. USA Petroleum Co. in a dissent by Justice Stevens. But here the words are used in contradiction to notions of efficiency. Justice Stevens writes: “The Court, in its haste to excuse illegal behavior in the name of efficiency, has cast aside a century of understanding that our antitrust laws are designed to safeguard more than efficiency and consumer welfare, and that private actions not only compensate the injured, but also deter wrongdoers.” (emphasis added) The line suggests that the purpose of antitrust laws goes beyond short-run welfare maximization.

In his dissent in Eastman Kodak, Justice Scalia accuses the majority of ignoring consumer welfare in application of a per se rule against tying. Similarly, Justice O’Connor, citing consumer welfare, accuses the majority in Edenfield v. Fane of “taking a wrong turn” in areas of speech.

In FCC v. Beach Commc’ns, Inc., the Court wrestled with an FCC franchising requirement. In explaining its understanding of the purpose of antitrust laws, the Court mentions consumer welfare in a way potentially inconsistent with Bork’s treatment: “Furthermore, small size is only one plausible ownership-related factor contributing to consumer welfare. Subscriber influence is another.” These are not necessarily output- or price-related goals.

In the 1990s, there are two cases in which SCOTUS mentions consumer welfare. In Brooke Group v. Brown & Williamson Tobacco, the Court talks of its precedent, Utah Pie, in terms of how the case has “been criticized on the grounds that such low standards of competitive injury are at odds with the antitrust laws’ traditional concern for consumer welfare and price competition.” It does not, however, explain the meaning of consumer welfare. It merely quotes the usual Chicago School authors as to the point and moves on.

In the 2000s, the term became more prevalent in SCOTUS opinions, but again the Court did not explain its meaning. Justice Stevens dissents in Granholm v. Heald against the removal of state wine restrictions because of Constitutional concerns. The Court in Weyerhaeuser notes that without recoupment, predatory pricing improves consumer welfare. Leegin, for all of its careful consideration of overturning Dr. Miles, mentions consumer welfare only three times, once quoting an amicus brief. In Kirtsaeng, it quotes Hovenkamp in passing for that proposition. In Alston, the Court cautions that judges implementing a remedy may produce outcomes worse than the market would. In a maritime tort case, the dissent warned that overwarning regarding contaminants would injure consumer welfare. In Ohio v. American Express, the Court favorably cites to Leegin for the notion of consumer welfare, but only in passing. In Actavis, too, the dissent points to consumer welfare.

That is the extent of the Supreme Court’s wisdom on consumer welfare. For nearly 100 years, the phrase “consumer welfare” did not appear anywhere in antitrust lore. It did appear elsewhere, a point with which we must contend if the Court knew of the term’s existence. Moreover, the Court has used the term inconsistently (within and beyond the antitrust laws), which suggests more haphazard citation than deliberate calculation. Or, perhaps more insidiously, it suggests an attempt to alter precedent via seemingly innocent citation, which in turn led to the term’s increased usage in antitrust.

Put one shoe on before the other

In contrast to the obscure tea leaves from the aforementioned cases, the Court made a very precise pronouncement as to the purpose of antitrust in 1962. In Brown Shoe v. United States, the Court details the legislative history of the antitrust laws. The Court makes clear it is interpreting legislative history and the will of Congress, not creating its own policy:

In the light of this extensive legislative attention to the measure, and the broad, general language finally selected by Congress for the expression of its will, we think it appropriate to review the history of the amended Act in determining whether the judgment of the court below was consistent with the intent of the legislature.

That legislative history does not detail consumer welfare, and indeed it could not, given that the Sherman Act passed in 1890, the same year Alfred Marshall published his book, Principles of Economics. Looking backwards (from current understanding, and implicitly thrusting that understanding on courts of yesteryear) is a problematic bias of this approach.

The Supreme Court goes on to note other aims of antitrust. It notes a focus on the rising tide of economic concentration. It even mentions some potential defenses, such as two small firms merging or a failing firm:

[A]t the same time that it sought to create an effective tool for preventing all mergers having demonstrable anti-competitive effects, Congress recognized the stimulation to competition that might flow from particular mergers. When concern as to the Act’s breadth was expressed, supporters of the amendments indicated that it would not impede, for example, a merger between two small companies to enable the combination to compete more effectively with larger corporations dominating the relevant market, nor a merger between a corporation which is financially healthy and a failing one which no longer can be a vital competitive factor in the market.

But it fails to mention other goals, including consumer welfare. And it explicitly rejects an efficiencies defense. Indeed, SCOTUS recognized that the goals of antitrust law may contradict expansions of output:

It is competition, not competitors, which the Act protects. But we cannot fail to recognize Congress’ desire to promote competition through the protection of viable, small, locally owned business. Congress appreciated that occasional higher costs and prices might result from the maintenance of fragmented industries and markets. It resolved these competing considerations in favor of decentralization. We must give effect to that decision.

In other words, prices might be higher and output lower when markets are less concentrated, but that is a price we are willing to pay in exchange for greater democracy and greater freedom from economic tyranny.

Looking backwards yields more heat than light

What all of this suggests is a strong movement and perhaps some misunderstandings by the Court about what consumer welfare means. Hovenkamp is right to be skeptical given that, as he points out, SCOTUS does not often use the term. But it’s worse than that.

The trouble comes in when Hovenkamp starts looking for output and price discussions as a proxy for consumer welfare. Here, he finds slightly more support in the tea leaves. But my critique of those considerations, beyond the points that overlap here, will have to wait for another blog post. At the very least, suffice it to say: If we’re using output as a measure of welfare, output holds the same problems as have been repeatedly stated as to consumer welfare. And if output is not a proxy for consumer welfare, then why are we measuring it again?