“I realized that ‘success’ for nearly all tech platforms meant things like maximizing the amount of time you spend with their product, keeping you scrolling as much as possible, or showing you as many ads as they can.”

— former Google employee, James Williams, explaining why he quit the company years ago

Suppliers owe a duty of care to their consumers. The duty of care applies to ensure every product and service does not cause harm. The supplier of a carbonated drink, a food producer, and a social media platform are all subject to the duty of care.

Manufacturers owe a duty to design products that do not pose foreseeable risks of harm. A defect in design occurs when the product falls below the standard of a reasonable manufacturer in ensuring safety and reliability. Manufacturers also have a duty to warn users of foreseeable risks associated with their products. This duty may overlap with statutory safety obligations under product safety regulations and consumer protection laws. Consumers have a legal right to expect that products they use, or purchase, will not cause them harm.

Social media addiction

Humans are social animals, wired to pursue experiences including friendly interactions in exchange for a reward of a dopamine release in our brain, which pushes us to repeat behaviours. On top of that, behaviours can be acquired through the association between an environmental stimulus and a naturally occurring one. Pairing a product with a naturally occurring stimulus such as catchy music or beautiful scenery can elicit a positive emotional response towards the product. The design of social media platforms, and of their evolving algorithms, is rooted in user engagement. Someone posts a photo on Instagram and gets “likes” in return. This reward hacks the brain’s social system, in which positive strokes are part of community reinforcement and social standing, and causes them to post again, add more “friends,” like their friends’ posts, and so on, in a spiral of reinforcement. The reinforcement generates feelings of wanting to keep looking at the feed. Those feelings, particularly the feeling of “missing out” when not regularly checking the feed, are what the platforms use to ensure user engagement and return, creating an ideal opportunity to promote, target, advertise and sell products to people.

A positive reinforcement such as the like button on social media platforms is a reward, encouraging behaviour to generate attention. Psychologists call the type of learning in which voluntary behaviours are shaped by reinforcements “operant conditioning,” essentially a reinforcement mechanism that can increase specific “positive” behaviours.

Consumers are not consciously aware this training is taking place, nor are they aware of the risks, nor have they had the consequences brought to their attention by way of clear notices or warnings in language that they understand. Facebook, Instagram, TikTok and YouTube, among other platforms, exploit human behavior, compete for consumer attention, and, as KGM v Meta et al. and New Mexico v Meta proved, breach statutory duty and are liable for negligence and negligent failure to warn.

Some studies have shown that people with frequent and problematic social media use can experience changes in brain structure similar to changes seen in individuals with substance use or gambling addictions. The reinforcement of behaviour to return to social media is creating a type of psychological and chemical addiction that platforms dismiss as “engagement.” The addiction of users to social media, particularly of young adults and children whose lives lie ahead of them, is beneficial for platforms that need to gather and sell data for advertising.

Children are most at risk

Addictive-by-design algorithms are a concern for society in general, but our children are most at risk.

In the United States, studies show that up to 95 percent of youth ages 13-17 use a social media platform, and almost 40 percent of children ages 8-12 use social media. From a young age, children and teens have been and continue to be exposed to inappropriate content on social media, including content relating to suicide and bullying as well as nudity and sexual and violent content.

Children may also be exposed more than adults to illegal and inappropriate content including misinformation, bullying and contact with predators; teens are exposed to three times more nudity than adults on social media.

Between ages 10 and 19, children undergo a highly sensitive period of brain development; risk-taking behaviours reach their peak, well-being experiences the greatest fluctuations, and mental health challenges such as depression typically emerge. During this period, brain development is especially susceptible to social pressures, peer opinions, and peer comparison.

Frequent social media use may be associated with distinct changes in the developing brain in the amygdala (important for emotional learning and behaviour) and the prefrontal cortex (important for impulse control, emotional regulation, and moderating social behaviour), and could increase sensitivity to social rewards and punishments. Early exposure of children to technologies and social media interferes with cognitive development and emotional regulation.

Social media can provide a sense of connection for some. Yet some social media platforms show live depictions of self-harm acts like partial asphyxiation, leading to seizures, and cutting, leading to significant bleeding. Studies found that discussing or showing this content can normalize such behaviours, including through the formation of suicide pacts and posting of self-harm models for others to follow.

Nearly half of all adolescents aged 13–17 said social media makes them feel worse. Nearly 60 percent of adolescent girls say they’ve been contacted by a stranger on certain social media platforms in ways that make them feel uncomfortable. More than 1 in 10 adolescents have shown signs of problematic social media behavior, struggling to control their use and experiencing negative consequences.

The abuse and exploitation of children in the digital environment is a human rights issue, and protection from it is explicitly provided for in the Convention on the Rights of the Child. A platform’s failure to protect children from viewing harmful content, such as information relating to suicide, self-harm and sexual content, which is occurring on platforms owned by Google and Meta, is a violation of the Convention.

Pointing fingers at victims and parents

Meta’s own internal studies under “Project MYST” found parental controls do not curb compulsive use. This study reportedly found that children who had experienced “adverse effects” were most likely to get addicted to Instagram, and that parents were powerless to stop the addiction. Mark Lanier, lawyer for the plaintiff in KGM v Meta et al., showed during the trial that Meta’s internal communications compared the platform’s effects to pushing drugs and gambling.

Pushing blame and responsibility on victims and parents looks to be an attempt by the platforms to ignore or deflect attention from the duty of care imposed by law on them to the benefit of their users. Breach of duty requires only that harm was caused negligently.

The internal documents disclosed in Meta’s litigation, alongside whistleblower testimony, now appear to indicate that the platforms appreciated the risks and went ahead regardless of the consequences.

The defendants attempted to argue that KGM had pre-existing mental health problems that were not caused by the platform itself and that she consented to the terms of use.

One vital element that is often overlooked because of the “one-size-fits-all” nature of the service on offer is that children do not legally have the ability to provide consent. In most jurisdictions, the legal age of majority for entering contracts is 18. While those under 18 can sometimes enter contracts such as for necessities where they benefit, they cannot be held responsible for any debt they owe. Notices of use presented by platforms for “click here” consents by minors are hence irrelevant to the duty of care.

Duty of care is owed to all, and platforms cannot benefit from their victims’ vulnerabilities under tort law. As established in Koch v. US (2017), the platforms still bear full responsibility for the consequences of their wrongful acts. In any given population, some will be more resilient than others and some will be more likely to be harmed than others.

In addition, when it comes to discharging the duty of care, the person who signs up for payment and pays the bill is usually a parent of the affected child. It is difficult to suggest that parents have provided properly informed consent to their children being harmed if there are no warnings from social media platforms about the effects of their use.

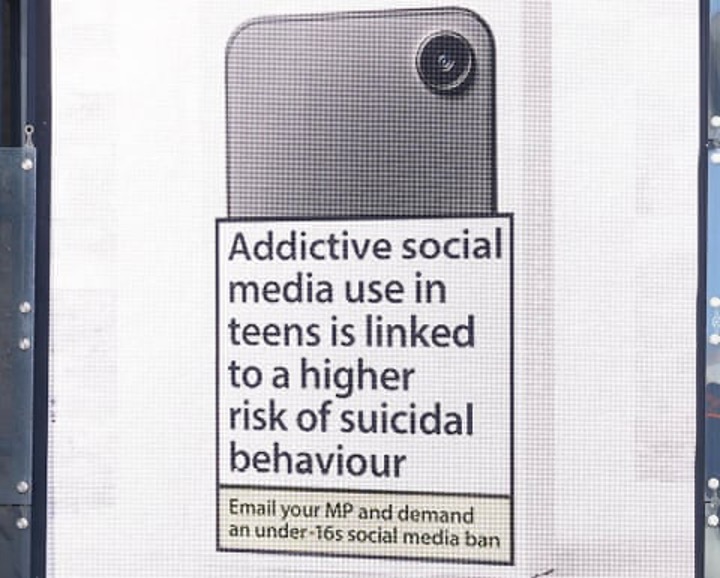

In the UK, groups are calling for properly informed consent and providing warnings to children and parents alike on the effects of using social media, including the impacts of the design of its algorithm. Mumsnet, a London-based internet forum, has called for an under-16s social media ban with a cigarette-style health warning, claiming that “three hours or more social media a day makes teens more likely to self-harm,” that teen phone addiction doubles the risk of anxiety, that social media use can increase the risk of eating disorders in young people and that addictive social media use in teens is linked to higher risk of suicidal behavior. Mumsnet has proposed packaging that would look like this:

A Mumsnet billboard ad, part of its Rage Against the Screen campaign. Photograph: David Parry/PA. The Guardian.

The irrelevant defense of “free speech”

It’s obvious that in most personal injury cases, “freedom of speech” is not a defence. In a car crash where a drunk driver hits a pedestrian at a crossing, it would be odd for the driver, when confronted with a civil suit for damages, to claim that he was talking on the phone at the time and hence exercising the right to free speech, that this may in some way have contributed to the lack of care and attention to driving, and that he should therefore be excused.

While bogus as a defense, in the example above the point is at least relevant. It is clearly relevant to the level of care and attention the driver is paying to the pedestrian. In the context of social media, the platform’s right to express itself freely is substantively irrelevant to its duty of care or the discharge of that duty of care.

It has nevertheless been used as a procedural torpedo to prevent state-level cases and legislation from reaching their natural conclusion. Under U.S. law, any freedom-of-speech issue that is raised must be dealt with as a matter for the federal, not state, courts. Constitutional matters cannot be decided by states, only by federal courts.

When state legislatures attempt to protect minors from the documented harms of social media, those efforts are frequently met with resistance from Big Tech–backed advocacy groups. NetChoice, for example, has repeatedly challenged child‑safety legislation on First Amendment grounds.

Representing the likes of Google, Meta, OpenAI, X, and TikTok, NetChoice claims it exists to keep the internet “safe for free enterprise and free expression.” In reality, it has used that mandate to prioritise corporate freedom, repeatedly challenging online child-safety laws under the First Amendment.

In NetChoice v. Yost (Ohio), the organization argued that Ohio’s Social Media Parental Notification Act violated free‑speech protections. The Act required minors under the age of 16 to obtain parental consent before creating a social media account. In April 2025, a U.S. District Court held the law unconstitutional and permanently enjoined its enforcement. The State appealed the decision, and as of February 4, 2026, the case is pending before the Sixth Circuit. In the meantime, the harms continue while what is a procedural challenge finds its way (slowly) through the court system, as was presumably the intent of those behind the NetChoice challenge.

Another tactic used by Big Tech is to rely on Section 230 of the Communications Decency Act, which was introduced as new-entrant protection by the Clinton administration in the 1990s to help fledgling internet businesses get off the ground. It provides limited federal immunity for online platforms that operate as mere conduits for others’ content, and prevents courts from treating them as the “publisher or speaker” of content created by other users of the network, with the liability that would otherwise follow. This provision has shielded companies such as Google and Meta from some civil claims arising from publisher liability for third-party content, limiting their accountability. KGM v Meta et al. simply took the old duties enshrined in the common law and applied them to new products and services, demonstrating that those duties apply to all in society.

The free speech argument distracts from the harm caused and from the duty of care that platforms owe at law. It also misses the wider public interests that are at stake. In response to President Trump’s claims that Europeans are engaging in “censorship” when they require platforms to remove illegal content, Margrethe Vestager, former vice president of the European Commission, along with her colleagues, argued that “the accusation of censorship ignores what is at stake”. Vestager et al. wrote:

There is a profound difference between regulating infrastructure and regulating speech. When we require platforms to be transparent about their algorithms, to assess risks to democracy and mental health, to remove clearly illegal content while notifying those affected, we are not censoring. We are insisting that companies with unprecedented power over public discourse operate with some measure of public accountability.

As we have set out above, the platforms’ digital systems “engage” with and affect everyone. They do that in ways that have been shown to be harmful, and which may breach their duty of care. Adults are allowed in our society to do dangerous things. But that does not discharge a duty of care on a platform that provides a dangerous product without explaining how its use can be harmful.

On any analysis a minor cannot give consent, let alone consent to being harmed.

Neither KGM v Meta et al. nor New Mexico v Meta seeks to ban social media for adults (or children) solely on the basis of its content. Both cases target the addictive design of a system that engages people in ways that ensure they feel deprived, and need to keep checking, if they do not know what is happening on their social media accounts.

When the dispute is framed as a clash between freedom of expression and regulation, something simple is revealed: Big Tech’s corporate speech is being protected, overriding state laws designed to safeguard children. Combined with First Amendment claims, this tactic reflects a broader strategy by Big Tech: exploiting legal loopholes and procedural tactics to evade accountability, at the expense of children’s safety and democratic state action.

No lesser standard for social media

In sectors like toys, transport, and medicine, the United States and many other countries worldwide take a safety-first approach; products must meet safety thresholds before reaching consumers, with protections in place until proven safe.

Even in America, the tide appears to be turning. At a Senate Commerce hearing in January, Texas Senator Ted Cruz said, “Big Tech loves to use grand eloquent phrases about bringing people together. But the simple reality and why so many Americans distrust Big Tech is you make money the more people are on your product, the more people are engaged in viewing content, even if that is harmful to them.”

Communications devices have only recently become social media platforms that mine people for data. Harm to children is often difficult to know about as they have nothing to compare their experience to; in the world of children, barrages of harmful online content could be “normal.” It is perhaps understandable that the harms being suffered have not been known or understood by the grown-ups.

Now we know.

The tech sector is responsible for 15 percent of global GDP, with TikTok, Meta, Apple and Alphabet among the most valuable and profitable companies in the world. They can afford to take more care, and they should. When it comes to precautionary standards, social media should not be subject to a lesser standard than other industries in our economy.