Back in February, Rob Manfred, the commissioner of Major League Baseball (MLB), sang a tune that is truly a classic in the history of labor relations in baseball. According to ESPN, Manfred noted that fans are sending emails expressing concern over the sport’s lack of a salary cap, purportedly spurred by an offseason spending spree by the Los Angeles Dodgers, a team that has won its division eleven times in twelve years. Manfred insisted that:

This is an issue that we need to be vigilant on. We need to pay attention to it and need to determine whether there are things that can be done to allay those kinds of concerns and make sure we have a competitive and healthy game going forward.

The NBA adopted a cap on payrolls (i.e., a salary cap) in 1983. Soon after, caps were instituted in the NFL, NHL, and WNBA. Despite repeated efforts by baseball owners in the last years of the 20th century, MLB players have consistently resisted the establishment of any cap on payroll.

Back in the 20th century, this conflict over salary controls led to a number of player strikes and owner lockouts. The last of these labor disputes began during the 1994 season. This strike led to the cancellation of the 1994 World Series and the postponement of the start of the 1995 season. Despite inflicting these losses, the strike didn’t lead to any cap on team payrolls.

For the most part, calls for a cap seem to have subsided in the 21st century. But in 2024, the Los Angeles Dodgers, with a payroll of $265.9 million, won the World Series. In the offseason, the Dodgers added about $65 million more to their payroll, and now lead all of baseball in spending on players (in 2024, they ranked third). Because some seem to think that spending and wins are highly correlated in baseball, it might have appeared to some that the Dodgers were trying to buy another title. And apparently this led some fans to email Rob Manfred.

We don’t know how many emails Manfred actually got calling for a salary cap. We do know that it is a myth that teams can buy championships in baseball. Back in 2006, we devoted an entire chapter in The Wages of Wins to the question “Can You Buy the Fan’s Love?” The chapter details all the reasons we thought baseball teams can’t simply buy wins and championships. For now, I’ll simply repeat the observation that from 1988 to 2006, only 18.1% of the variation in a team’s winning percentage could be explained by that team’s relative payroll (i.e., team payroll divided by the average payroll that season). That leaves roughly 82% of the variation in winning percentage to be explained by factors other than what teams spent on players. In simple words, teams cannot simply buy wins!

We repeated this analysis for the 2011 to 2024 seasons. Across these 14 seasons, only 13% of the variation in winning percentage could be explained by relative payroll.
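The relative-payroll measure and the “share of variation explained” figures above come from a simple one-variable regression. The sketch below shows one way to compute both, assuming a list of (season, team, payroll, winning percentage) rows. The function names and the toy data are hypothetical; they are not the book’s actual code or real MLB figures.

```python
from collections import defaultdict

def relative_payrolls(rows):
    """Convert (season, team, payroll, win_pct) rows into
    (relative_payroll, win_pct) pairs, where relative payroll is the
    team's payroll divided by the league-average payroll that season."""
    rows = list(rows)  # iterated twice below
    totals = defaultdict(float)
    counts = defaultdict(int)
    for season, _team, payroll, _wp in rows:
        totals[season] += payroll
        counts[season] += 1
    pairs = []
    for season, _team, payroll, win_pct in rows:
        season_avg = totals[season] / counts[season]
        pairs.append((payroll / season_avg, win_pct))
    return pairs

def r_squared(xs, ys):
    """Share of the variance in ys explained by a least-squares line on xs.
    For a one-variable regression this equals the squared correlation."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy * sxy / (sxx * syy)

# Hypothetical toy data (payroll in $ millions); NOT real MLB figures.
rows = [
    (2023, "Team A", 250.0, 0.580),
    (2023, "Team B", 150.0, 0.540),
    (2023, "Team C", 100.0, 0.470),
    (2023, "Team D", 60.0, 0.510),
    (2024, "Team A", 265.0, 0.560),
    (2024, "Team B", 140.0, 0.490),
    (2024, "Team C", 110.0, 0.530),
    (2024, "Team D", 70.0, 0.420),
]
pairs = relative_payrolls(rows)
xs = [rel for rel, _ in pairs]
ys = [wp for _, wp in pairs]
print(f"R^2 = {r_squared(xs, ys):.3f}")
```

An R² of 0.13 means relative payroll accounts for 13% of the variance in winning percentage, leaving the other 87% to everything else.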

So if spending can’t fully explain wins, can it explain championships? It turns out buying a title is even harder. From 2011 to 2024, none of the ten teams with the highest relative payroll even made it to the World Series. Yes, the highest-spending teams in baseball didn’t even get a chance to lose in the World Series!

At the All-Star break in 2025, we seem to be seeing the same story. The top team in baseball in terms of winning percentage is the Detroit Tigers. The Dodgers rank second, but they are not much better than five or six other teams. Some of those teams, like the New York Mets, also have a very high payroll. The Milwaukee Brewers, with an impressive record of 16 games over .500, pay their players less than the Tigers do.

How can the Tigers and Brewers compete with the Dodgers and Mets? It turns out that baseball effectively has two different labor markets. The Dodgers and Mets generally find their best players in the free agent market. To be in free agency, a player must complete six years of MLB service. Once a player’s career reaches that point and they are without a contract, they can sell their services to the highest bidder.

Back in 2016, the Detroit Tigers played in that market. But when the Tigers’ owner, Mike Ilitch, passed away in 2017, his son, Christopher, decided the Tigers would get out of the free agent market and try to find their best players through the draft. Hence, most of the Tigers today have less than six years of service. As the Tigers have shown this year, such players can be quite good. And relative to the players on the Dodgers, they are also quite cheap.

Of course, the Dodgers have also built a competitive team. And maybe the Dodgers do win the title in 2025. But at the All-Star break it seems clear that title is not guaranteed. So, why won’t all that spending ensure a Dodger repeat?

Let’s start with the obvious reason. Baseball is a game where you hit a round ball with a round stick. There is no hitter in baseball that a pitcher can’t get out. And there is no pitcher in baseball that a hitter can’t hit. The game simply has a large random element. In addition to random variation in performance, there is also no way to predict injuries. The injury issue is especially relevant in the free agent market, as many players are on the downside of their career after six years of playing.

Beyond the randomness of performance is the simple fact that the difference in playing talent has shrunk considerably across time. We can see this if we look at the level of competitive balance in baseball. As noted in The Wages of Wins, competitive balance improved dramatically in the second half of the 20th century as the talent pool got much bigger. Specifically, as teams started employing African Americans and then players from all over the world, the supply of very talented players increased. Consequently, more teams had access to very good players.

One can see this simply by looking at how often teams win more than two-thirds of their games. Since 1901 this has happened just 30 times. Of these, only three instances happened in the 21st century. It also happened six times in the second half of the 20th century. That means that prior to 1950, this happened 21 times (equal to 30 less three less six). Once upon a time, it was truly possible to build a baseball team that dominated the game. This happens when your team has lots of great players and other teams… well, they don’t!

Of course, that is just dominance in the regular season. As the Seattle Mariners learned in 2001, dominating the regular season doesn’t guarantee post-season happiness. After winning 116 games in 2001, tied for the most wins in baseball history, the Mariners were eliminated in the American League Championship Series.

At that time, eight teams participated in the playoffs. Today that number has grown to twelve. Because playoff teams are often not much different from one another, the odds of any single playoff team winning the World Series are probably less than 10%. And that is true regardless of how much money you spend. Player performance from week to week is simply not that predictable. If your star hitters or pitchers (or both) have a bad week in October, your fans will end the season sad.
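To see why even a good team’s title odds stay modest, here is a minimal sketch that treats each playoff game as an independent coin flip weighted toward the better team. The 55% per-game edge against every opponent is an assumption chosen for illustration, as are the function names. The series lengths follow the current bracket: a best-of-three Wild Card Series, a best-of-five Division Series, and best-of-seven League Championship Series and World Series.

```python
from math import comb

def series_win_prob(p, games):
    """Probability of winning a best-of-`games` series when each game is won
    independently with probability p. Winning a majority of `games` games is
    equivalent to winning the series, so we can count as if all were played."""
    need = games // 2 + 1
    return sum(comb(games, k) * p**k * (1 - p) ** (games - k)
               for k in range(need, games + 1))

def title_prob(p, series_lengths):
    """Probability of winning every series in sequence (independence assumed)."""
    prob = 1.0
    for games in series_lengths:
        prob *= series_win_prob(p, games)
    return prob

# Assumption: the favorite wins 55% of games against every playoff opponent.
p = 0.55
print(f"Best-of-7 series: {series_win_prob(p, 7):.3f}")
# Team without a first-round bye: best-of-3, best-of-5, best-of-7, best-of-7.
print(f"Wild-card path:   {title_prob(p, [3, 5, 7, 7]):.3f}")
# Team with a first-round bye skips the best-of-3 round.
print(f"Bye path:         {title_prob(p, [5, 7, 7]):.3f}")
```

Under these assumptions, a team that wins 55% of its games against every playoff opponent takes a best-of-seven series only about 61% of the time, and wins the whole bracket only about 22% of the time with a bye and about 13% without one.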

All of this means the Dodgers simply can’t buy a title. So, why do owners want a salary cap? The spending by teams like the Dodgers does bid up the cost of free agents. If the league could cap spending, players would generally be cheaper. And that would transfer millions of dollars back to the owners.

Yes, none of this is about improving competitive balance and making the game better. In fact, as we noted in The Wages of Wins, there isn’t even much evidence that fans truly want competitive balance. Extensive studies of consumer demand and competitive balance tell that story. And every baseball fan learned that lesson when the Texas Rangers played the Arizona Diamondbacks in the 2023 World Series. Fans who wanted to see small-market teams (i.e., teams not on the coasts) compete for a title got what they wanted that year. But it turns out few other people cared to watch.

In the end, the call for a salary cap has nothing to do with making the game more popular. Owners have consistently called for a cap on pay for the obvious reason they want to pay their workers less. And gullible fans (and members of the media) are often quite happy to help them achieve their dream.

But if baseball does achieve a cap on pay after 2026, you are not going to see balance in baseball improve dramatically. And you won’t see more fans in the stands or watching on television. What you will see is more owners counting more dollars.

Once again, we said all this twenty years ago in The Wages of Wins. Yes, sometimes it is fun to hear the classics!

Since the launch of ChatGPT back in November of 2022, what was once a concept confined to sci-fi novels has now certifiably hit the mainstream. The highly visible advances in artificial intelligence (AI) over the past few years have been either awe-inspiring or dread-inducing depending on your perspective, your occupation, and maybe how much Nvidia stock you owned before 2023. Many white-collar workers now fear that they may face the same job-displacing effects of automation that have plagued their blue-collar peers over the past several decades.

Nevertheless, at least one powerful constituency is absolutely thrilled with the rise of AI and is betting big on its success: Big Tech. Microsoft, currently the second most valuable company in the world with a mind-boggling $3.7 trillion market cap, is a leading AI zealot. This fiscal year alone, Microsoft plans to invest over $80 billion in AI-related projects.

As one of its big selling points to investors and consumers, Microsoft argues that AI has prompted massive efficiency gains internally, including the elimination of a staggering 36,000 jobs since 2023. Microsoft CEO Satya Nadella estimated that as much as 30 percent of the company’s code is now written by AI. Mr. Nadella, of course, has a lot riding on convincing shareholders and consumers that AI is a big deal, so the extent to which this claim is legitimate, rather than pure marketing fantasy, is uncertain. A recent working paper authored by Microsoft researchers and academics analyzes the productivity of (non-terminated) software developers who use AI tools. The authors find that developers using AI tools saw an average 26 percent increase in their productivity. If such experimental results generalize to the broader labor market, AI will certainly have a dramatic impact. But although companies evidently benefit from this productivity boon, whether workers themselves stand to gain remains uncertain.

A Look into Software Developers’ Compensation

AI models capable of assisting with writing and coding tasks have existed for a couple of years now. Taking Mr. Nadella’s statements at face value, such models are widely used by developers and coders working for Big Tech. As such, if workers, and not just their employers, stand to benefit from AI, then worker compensation should reflect at least some of these productivity gains.

Simple economic models of the labor market suggest that a technology that boosts the marginal productivity of labor will cause a concomitant increase in worker pay. After all, in competitive labor markets, workers should capture 100 percent of their marginal revenue product (MRP), which increases with productivity. That outcome, however, rests upon a strong and often-violated assumption: that the relevant labor market is perfectly competitive. When an employer has buying power, it can drive a wedge between the worker’s MRP and her wage. In lay terms, the employer can appropriate value created by the worker without sharing the gains, which is the Pigouvian definition of exploitation. Thus, the extent to which workers benefit from this AI-induced productivity remains unclear. (In addition, a monopsony reduces employment relative to a competitive labor market; Microsoft’s mass firings since its acquisition of Activision in 2023 are also consistent with the exercise of monopsony power.)
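A standard textbook case makes the wedge concrete. The sketch below assumes a constant MRP and a linear inverse labor supply curve, w(L) = a + bL. This is a generic classroom model, not one the authors estimate, and the parameter values are hypothetical.

```python
def monopsony_outcome(mrp, a, b):
    """Textbook monopsony with constant marginal revenue product `mrp` and
    linear inverse labor supply w(L) = a + b*L. The firm hires until MRP
    equals the marginal cost of labor, d[(a + b*L) * L]/dL = a + 2*b*L,
    then pays the wage read off the supply curve."""
    employment = (mrp - a) / (2 * b)
    wage = a + b * employment  # equals (a + mrp) / 2
    return employment, wage

def competitive_outcome(mrp, a, b):
    """Competitive benchmark: the wage equals MRP; employment comes from supply."""
    employment = (mrp - a) / b
    return employment, mrp

# Hypothetical parameters, chosen only for illustration.
a, b = 20.0, 1.0
for mrp in (100.0, 126.0):  # a 26% productivity gain
    L_m, w_m = monopsony_outcome(mrp, a, b)
    L_c, w_c = competitive_outcome(mrp, a, b)
    print(f"MRP={mrp}: monopsony L={L_m}, w={w_m}; competitive L={L_c}, w={w_c}")
```

With these numbers, raising MRP by 26% (from 100 to 126) lifts the competitive wage by 26 but the monopsony wage by only 13 (from 60 to 73). In this linear model the firm passes through just half of any productivity gain, and it hires half as many workers as a competitive market would.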

While a recent article in The Economist highlights how the AI boom has led to some “superstar coders” seeing their “pay [] going ballistic,” this subset of workers represents a tiny sliver of the total labor market of developers. In that same article, The Economist also produced a graph showing a dramatic slowdown in hiring—job postings for software developers have dropped by more than two-thirds since the beginning of 2022. To understand how AI is affecting workers, we need to look at the labor market at large. Unfortunately, our analysis suggests that software developers have not yet benefited (and may never fully benefit) from their increase in productivity.

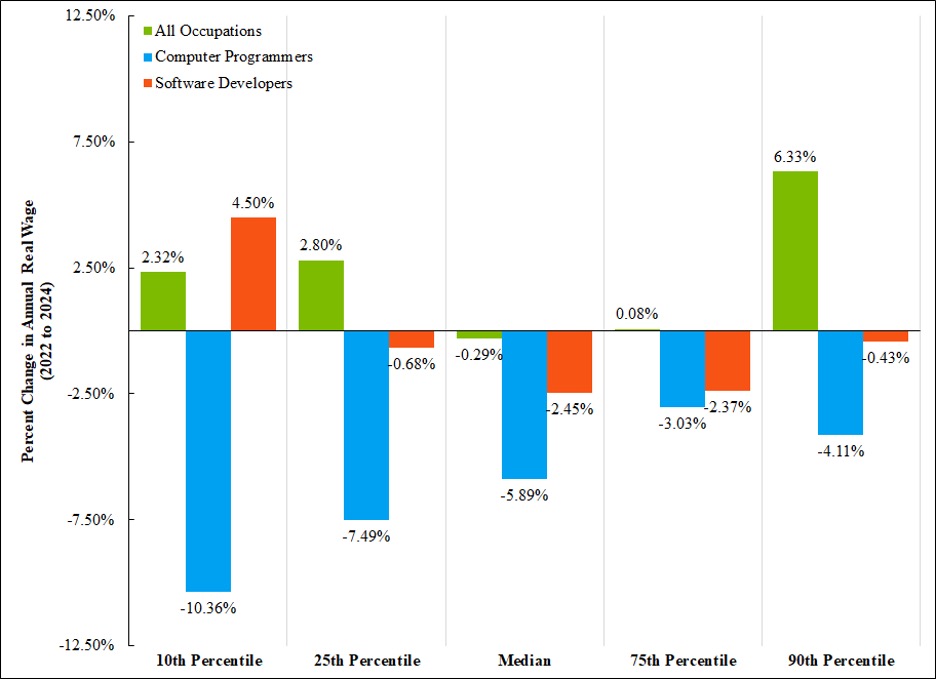

Figure 1 below takes the broadest look at how all software developers and computer programmers in the United States have (or have not) benefited from the rise of AI. The results are not pretty: While the inflation of 2022 hit all workers hard, eroding much of their nominal wage increases, both computer programmers and software developers are faring much worse than the average worker. Per the BLS, the real median wage of computer programmers decreased by 5.89 percent between 2022 and 2024.

Figure 1: Real Wages Are Flat for Most Workers, But Have Declined for Programmers and Developers

Source: Bureau of Labor Statistics’ Occupational Employment and Wage Statistics Annual Report; CPI sourced from FRED. Notes: We converted these nominal data to 2024 dollars using the CPI. Hence, this chart shows the real change in wages between 2022 and 2024 (i.e., accounting for inflation). 2024 is the most recent data release, and the 2024 data do not include Colorado.
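The inflation adjustment described in the notes amounts to restating the 2022 wage in 2024 dollars before taking the percent change. A minimal sketch, with hypothetical wage and CPI values rather than the actual BLS and FRED figures (the function name is also ours):

```python
def real_pct_change(nominal_start, nominal_end, cpi_start, cpi_end):
    """Percent change in a wage after converting the starting value into
    end-year dollars with the CPI ratio (cpi_end / cpi_start)."""
    start_in_end_dollars = nominal_start * cpi_end / cpi_start
    return 100.0 * (nominal_end / start_in_end_dollars - 1.0)

# Hypothetical inputs: a wage that rises 2% nominally while prices rise 8%.
print(f"{real_pct_change(100_000, 102_000, 100.0, 108.0):.2f}%")
```

Because the starting value is restated in end-year dollars, a wage that merely keeps pace with the CPI shows a real change of zero, and any nominal raise smaller than inflation shows up as a real decline.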

Not even the top ten percent of software developers, including the “superstar coders” dubbed by The Economist, appear to be thriving. Figure 1 also shows that the highest-paid computer programmers (90th percentile) saw their real wages fall by 4.11 percent.

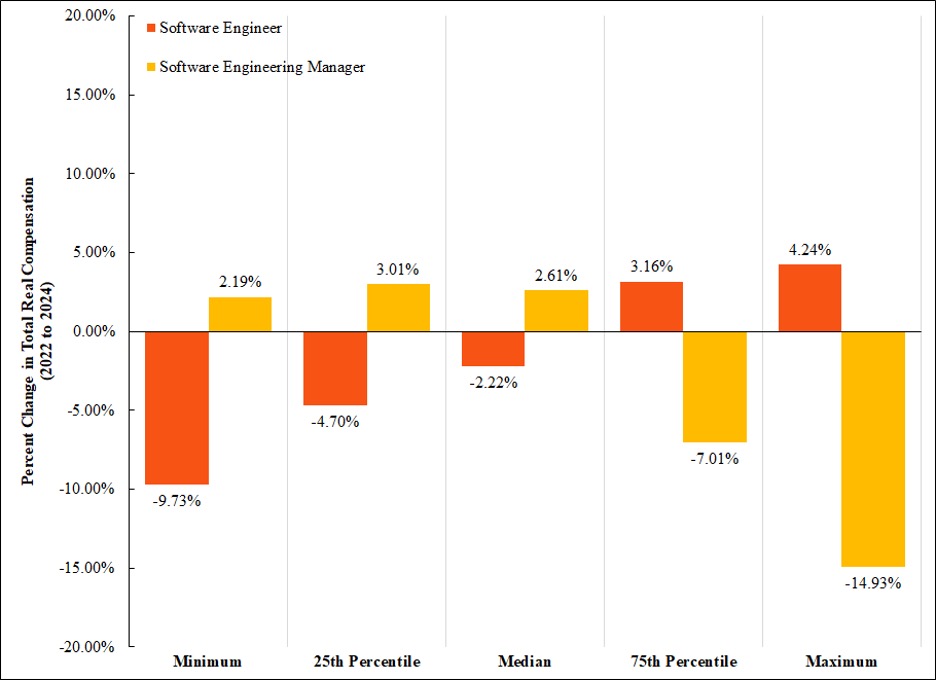

Workers for Big Tech fared no better. Indeed, the percentage change in the median compensation for software engineers employed by Big Tech effectively mirrors that reported in Figure 1—the median software engineer saw a 2.22 percent decrease in their real wages from 2022 to 2024 per data from Levels.fyi.

Figure 2: Software Engineers Working in Big Tech Also Have Not Seen a Dramatic Rise in Wages

Source: Levels.fyi 2024 and 2023 year-end reports; CPI sourced from FRED. Notes: Levels.fyi collects self-reported data “for the top paying tech companies and locations.” Total compensation includes base salaries, stock grants, and bonuses. Note that Levels.fyi’s trend table has slightly different median compensation estimates than the box charts that we source our data from. It is unclear what causes this discrepancy. We converted these nominal data to 2024 dollars using the CPI. Hence, this chart shows the real change in total compensation between 2022 and 2024 (i.e., accounting for inflation).

At the very least, we see evidence that software engineering managers (depicted in yellow) have seen their compensation rise (by 2.61 percent), though nowhere near their supposed AI-powered productivity increase.

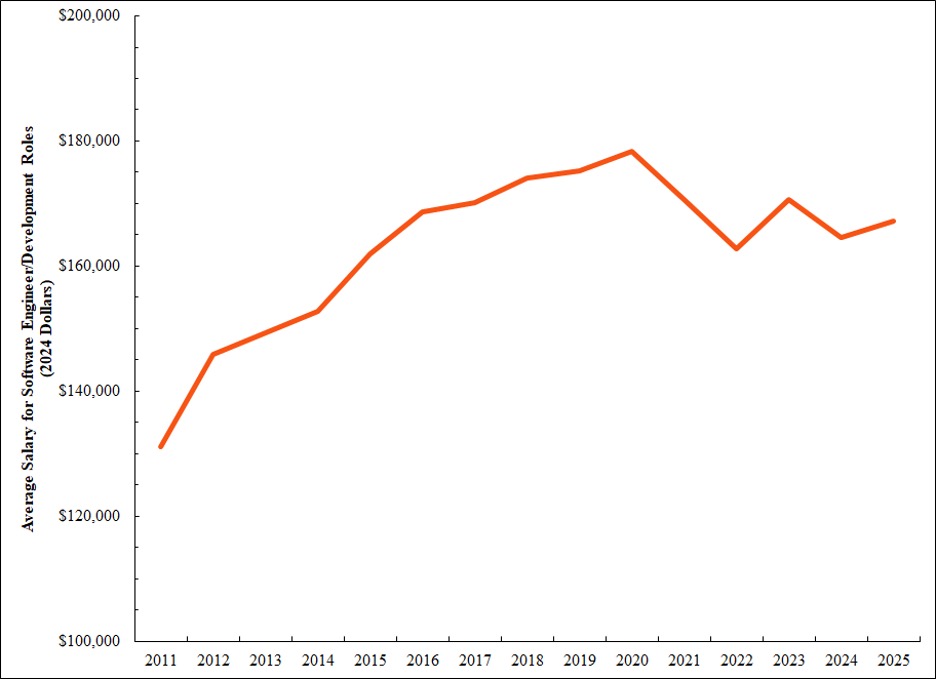

Microsoft-specific wage data were not easily accessible. The Economist reported that the median pay for software developers at “tech giants including Alphabet, Microsoft and, until recently, Meta” was close to $300,000. Lucky for us, however, Microsoft sponsors thousands of H-1B visas, which provides a source of publicly available salary data. Using these data, we can get a sense of the trend in how Microsoft software engineer compensation has evolved over time. Because they are beholden to their American employer, H-1B visa-holders likely earn wages below their American counterparts. Nevertheless, the trajectory of wages of H-1B workers should roughly track the trajectory of wages of their American peers.

Figure 3: H-1B Data Suggest That Microsoft Software Engineers’ Real Wage Stagnated in the 2020s

Source: Data is from H1B Grader.com, which states that “salaries data is extracted from the H1B Labor Condition Applications (LCAs) filed with the US Department of Labor by [the] Microsoft Corporation.” Notes: We combined various positions’ pay information to produce this average salary measure. Positions that were consolidated had titles that indicated they were roles in software engineering or development. We explicitly excluded IT-specific roles.
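The notes describe pooling LCA salaries across software engineering and development titles while excluding IT roles. One hypothetical way to do that is a keyword filter followed by a per-year average. The regular expressions, the function name, and the sample filings below are our assumptions, since the exact list of titles the authors consolidated is not given.

```python
import re
from collections import defaultdict

# Hypothetical keyword rules; the authors' exact title list is not given.
INCLUDE = re.compile(r"software (engineer|development|developer)", re.IGNORECASE)
# Case-sensitive, so only the standalone acronym "IT" triggers exclusion.
EXCLUDE = re.compile(r"\bIT\b")

def average_salary_by_year(filings):
    """filings: iterable of (year, job_title, salary) tuples from LCA records.
    Returns {year: mean salary} over software engineering/development titles."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for year, title, salary in filings:
        if INCLUDE.search(title) and not EXCLUDE.search(title):
            sums[year] += salary
            counts[year] += 1
    return {year: sums[year] / counts[year] for year in sorted(sums)}

# Hypothetical filings, not real Microsoft LCA data.
filings = [
    (2021, "Software Engineer II", 150_000),
    (2021, "Senior Software Engineer", 180_000),
    (2021, "IT Software Engineer", 120_000),   # excluded as an IT role
    (2022, "Software Development Engineer", 160_000),
    (2022, "Data Scientist", 170_000),          # not a software title
]
print(average_salary_by_year(filings))
```

Each year’s nominal averages would then still need the CPI adjustment before plotting a real-wage trend.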

While H-1B software engineers working at Microsoft saw real wage increases during the 2010s, by the 2020s, real wages appear to have stagnated. This trajectory likely reflects the trend for all Microsoft developers, including domestic workers.

While these figures are by no means perfect, if workers truly reaped benefits from their AI-boosted productivity in a significant way, the above charts should have reflected such an outcome. Unfortunately, from what we can see, wages have not captured much of AI’s productivity impact. This lends credence to the hypothesis of monopsony exploitation restraining wage growth—in other words, Microsoft (the employer) is appropriating the productivity gains of its workers, presumably because the workers do not have credible outside employment options to which they could turn easily in response to a wage cut.

Software Developers Face an Uncertain Future

Unfortunately, not only have software developers failed to see compensation gains commensurate with their productivity increases, but many also now risk losing their jobs. As noted above, Microsoft has shed 36,000 jobs since 2023.

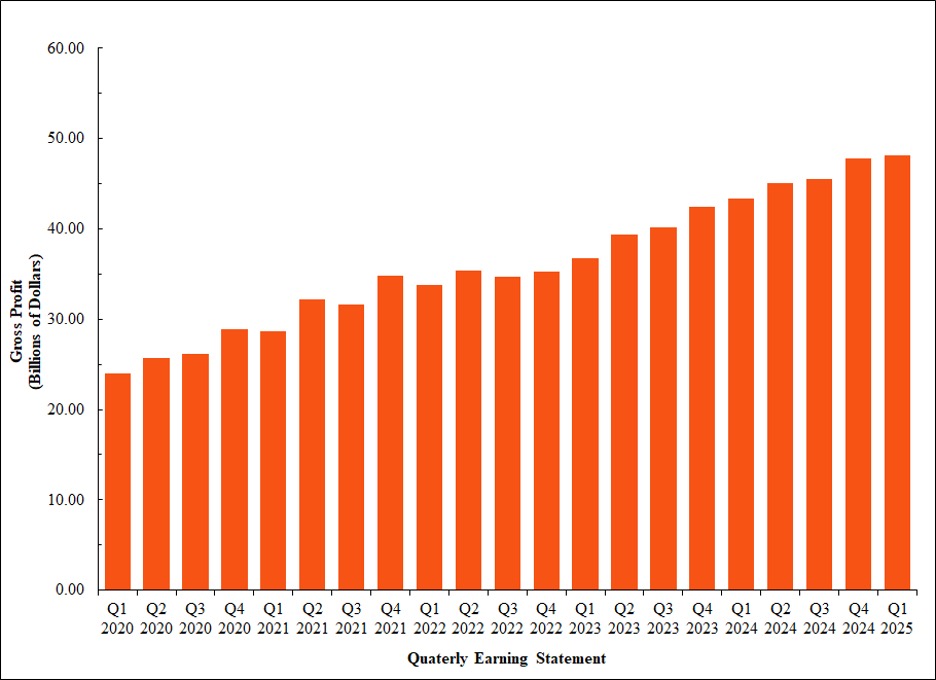

The cause of these mass layoffs does not appear to lie with any underperformance on Microsoft’s part. On the contrary, Microsoft’s gross profits have continued to rise over the past few years, as seen in Figure 4 below.

Figure 4: Microsoft Has Seen Significant Profit Growth in the Past Five Years

Source: MacroTrends.net.

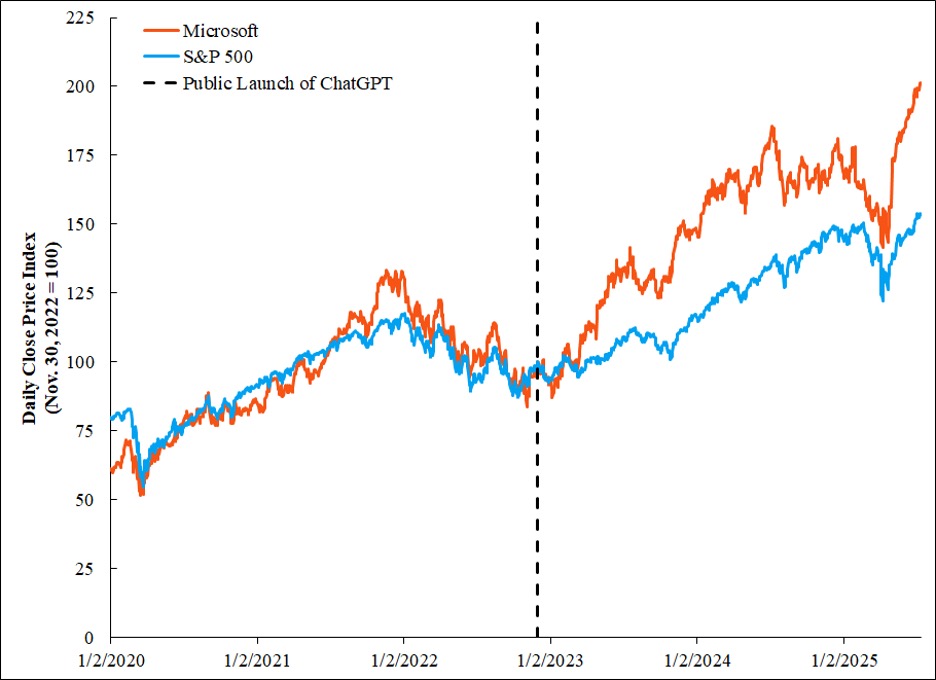

Microsoft stock has also performed tremendously since the release of ChatGPT. If anyone is benefiting from the increased productivity of its workers, it appears to be Microsoft itself. (To be fair, given that Big Tech workers’ compensation packages often include stock, they too benefit from the AI rally, even if the compensation figures reviewed above may not reflect such increases.) The combination of layoffs and no real impact on pay at least suggests that AI will function as a substitute for, rather than a complement to, human labor.

Figure 5: Microsoft Stock Has Performed Well in the Age of AI

Source: Data retrieved using the getsymbols package in Stata, sourced from Yahoo! Finance. Notes: As is standard, we used the adjusted closing stock price. Data run from Jan. 2, 2020 to July 11, 2025. The closing price is indexed such that November 30, 2022 equals 100 (notable for being the date OpenAI first publicly released a demo of ChatGPT, which would go on to reach a million users in less than a week).
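The indexing in Figure 5 rescales the price series so that the base date equals 100. A short sketch of the same arithmetic in Python, using made-up adjusted closing prices rather than actual Microsoft data (the authors used Stata; the function name here is ours):

```python
from datetime import date

def index_to_base(prices, base_date):
    """Rescale a {date: adjusted close} series so the base date equals 100."""
    base = prices[base_date]
    return {d: 100.0 * p / base for d, p in sorted(prices.items())}

# Hypothetical adjusted closes, NOT real MSFT prices.
prices = {
    date(2022, 11, 29): 240.0,
    date(2022, 11, 30): 250.0,
    date(2023, 11, 30): 375.0,
}
indexed = index_to_base(prices, date(2022, 11, 30))
for d, value in indexed.items():
    print(d, round(value, 1))
```

An indexed value of 150 reads directly as “50 percent above the price on the base date.”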

AI Fits a Trend of Growing Productivity and Wage Stagnation

Whether AI will truly revolutionize the workplace and make many human workers “go the way of the horses” remains to be seen. From what we have analyzed, however, even if AI does not replace human labor, workers should not place too much hope in reaping the rewards of their increased productivity. AI continues a trend that started back in the 1980s: the divergence between workers’ productivity growth and their wages. Without a significant policy intervention in labor markets, such as a federal job guarantee or unionization to countervail monopsony power, AI may be a technology that continues to exacerbate the inequality of the 21st century.

On Thanksgiving Day in 1971, the top-ranked Nebraska Cornhuskers faced the second-ranked Oklahoma Sooners in a game that is today known as “The Game of the Century.” On that day, Nebraska proved victorious over its archrival and secured the Big Eight title. Across the years, these two teams frequently met in November, and that game frequently decided a conference title. So this rivalry goes far beyond one game in 1971. The rivalry between Nebraska and Oklahoma was arguably one of the most important rivalries in the history of college football.

Or so it was until conference realignment.

Today a game between these two teams wouldn’t mean much at all. In 2011, the University of Nebraska left the Big 12 for the Big Ten. And next year, Oklahoma will leave the Big 12 for the SEC. Although Nebraska and Oklahoma may play each other in this century, it is a safe bet the “Game of the 21st Century” will not be between Nebraska and Oklahoma. The desire to play in bigger and more financially successful conferences effectively killed this rivalry.

For many people, Nebraska and Oklahoma fleeing the Big 12 is part of the inevitable decline in college football. This alleged decline has been hastened by the implosion of the Pac-12 in recent weeks. But it is important to put these moves in some perspective.

According to the Department of Education, in 2010—the last year Nebraska played in the Big 12—the football team reported about $55 million in revenues. In 2019, Nebraska’s revenues reached $108 million. Adjusted for inflation, its football revenues increased by over 50 percent in just ten years.

A similar story can be told about Oklahoma. In 2010, Oklahoma reported $58 million in football revenue. In 2019, that number had increased to $115 million. Again, adjusted for inflation, this is more than a 50 percent increase in revenue in one decade.
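The inflation adjustment for these revenue figures divides nominal growth by growth in the price level. A minimal sketch, assuming the CPI-U annual averages shown below (approximate values we supply, to be verified against BLS or FRED) rather than whatever deflator the original calculation used; the function name is hypothetical.

```python
def real_growth(rev_start, rev_end, cpi_start, cpi_end):
    """Inflation-adjusted growth rate: the nominal growth factor divided by
    the price-level growth factor, minus one."""
    return (rev_end / rev_start) / (cpi_end / cpi_start) - 1.0

# CPI-U annual averages, approximate (assumption; verify against BLS/FRED).
CPI_2010, CPI_2019 = 218.056, 255.657

# Football revenue in $ millions, as reported in the text.
for school, r2010, r2019 in [("Nebraska", 55.0, 108.0), ("Oklahoma", 58.0, 115.0)]:
    growth = real_growth(r2010, r2019, CPI_2010, CPI_2019)
    print(f"{school}: {100 * growth:.0f}% real growth, 2010-2019")
```

With these CPI values, both schools’ real football revenue growth from 2010 to 2019 comes out above 50 percent.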

Yes, ending the rivalry likely disappointed some fans. But in terms of revenue, both teams did quite well after Nebraska left the Big 12. And that was true even though Nebraska has fallen from the ranks of dominant college football powers.

From a business perspective, neither the University of Nebraska nor the University of Oklahoma appear to be impacted much by what has transpired since the Cornhuskers left the Big 12. And that story is consistent with what we see in general in college football. According to the Department of Education, the average team in the Football Bowl Subdivision (formerly known as Division I-A) has seen its revenues grow from $22.4 million in 2010 to over $40 million in 2021. Adjusted for inflation, that’s about a 44 percent increase. In sum, college football has attracted substantial audiences since the 19th century and continues to do just fine!

It is important, though, that we put that business in some sort of perspective. The University of Nebraska–Lincoln reports that the total revenue for the school in 2023-24 was about $1.5 billion. And if we look at the entire University of Nebraska system (it has multiple campuses), the reported revenue is $3.3 billion. Yes, that’s billion with a “b”!!

It’s possible that when people around the nation think about the University of Nebraska, they think about its football team. But athletics are a tiny part of the business of the University of Nebraska. And that is essentially the story wherever you look in higher education. Yes, people may know more about the exploits of a school’s athletics than they do about the accomplishments of a school’s economics professors, but academics, as Charles Davidson of the Federal Reserve Bank of Atlanta has argued, remain the primary business of colleges and universities in this country.

So college football can be thought of as a thriving but relatively small business. Some fans of your school may live and die with the exploits of their favorite team on Saturday afternoon. But the university continues regardless of what is on the scoreboard.

Growing Revenues Mask a Larger Problem

The thriving nature of college football might suggest that there are no problems. Unfortunately, that’s definitely not true. In fact, it has never been true. College football has a problem. Indeed, all of college athletics has a problem, and this problem has always needed to be fixed.

Back in the 19th century, a decision was made at American universities that tickets would be sold at athletic contests involving university students. Soon after, those contests became a thriving small business. By the 1880s, thousands of fans were showing up to watch college football and those fans gave the schools hosting the games thousands of dollars.

The schools decided that all those dollars were not going to be shared with the students the fans were watching. Athletes in these contests were labeled “amateurs,” a word that came to mean “you ain’t getting paid.”

Okay, there was some payment. Universities often agreed to give the students a scholarship to the school. But the pay to the athletes in college sports was tightly controlled by the universities.

In economics we have a word for such a system. The word is “monopsony.” More specifically, an employer has monopsony power when it has substantial power to set the wages of its employees. For more than a century, colleges and universities have made millions of dollars from the students playing the games we watch. And the monopsony power of colleges and universities allows them to greatly restrict the compensation that the athletes generating these dollars receive.

At least, that is the story we were told. For years we suspected that many athletes were receiving additional benefits from boosters of athletic programs. Such benefits violated the rules of college sports. Nevertheless, schools that wanted to employ specific individuals would use boosters to help recruit that talent.

Now this has all changed. The efforts boosters had made to recruit talent under the table in the past can now be made in the full light of day. Starting in 2021, college athletes could be compensated for their Name, Image, and Likeness (NIL). This means a booster can now simply give money to the athletes they want to see compete for their favorite team.

Of course, this is not the intent of NIL deals. An NIL deal is supposedly about an athlete being hired to do something like pitch a product. Certainly, such deals are being made. And it certainly makes sense that an athlete whose NIL qualities are worth something in the marketplace should be compensated. To use a person’s NIL without paying that individual is, as the courts have ruled, very, very wrong.

Right?

Well, there’s one obvious exception. Consider this scenario. An athlete, with a university’s name clearly advertised on their uniform, is featured in a highlight on ESPN. Just like an athlete advertising Wendy’s, that highlight advertises the school. And that athlete’s NIL is clearly part of that advertisement. But it seems few people think that athlete is entitled to any compensation for appearing in this highlight. Certainly, the universities don’t think so. Once again, universities have monopsonistic power, and they have decided to restrict the payment of athletes to the cost of attendance.

Of course, college athletics aren’t just about advertising a university. College athletics also directly generate revenue for the school. For example, the University of Oklahoma reported in 2021 to the Department of Education that its athletic teams generated $157 million in revenue. The athletic program had 596 participants. Imagine the Sooners did what professional sports in North America generally do and gave 50% of their revenue to their players. If they did this, the 596 athletes would split nearly $80 million. Or to put it another way, each athlete would get more than $130,000 to play for the Sooners. Yes, an education at the University of Oklahoma is worth quite a bit. But it is not worth $130,000 per year.
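The arithmetic behind the Oklahoma example is a single division: take the players’ share of revenue and split it equally across all participants. A quick check, using the figures reported above and a hypothetical helper name:

```python
def per_athlete_share(revenue, players_share, athletes):
    """Revenue pool given to players, split equally across all athletes."""
    pool = revenue * players_share
    return pool, pool / athletes

# Figures from the text: $157 million in revenue, 50% to players, 596 athletes.
pool, each = per_athlete_share(157_000_000, 0.50, 596)
print(f"pool ${pool:,.0f}; each ${each:,.0f}")
```

Half of $157 million is $78.5 million, or about $131,700 for each of the 596 athletes.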

Should the schools split the revenues equally across all athletic teams? One could argue that teams and players that generate more revenue on the field should be paid more. For example, consider a study of the men’s basketball team at Duke University in 2014-15. For that season, Duke University told the Department of Education that the men’s basketball team generated $33.7 million in revenue. If half of that revenue went to the players, then those players would receive about $16.9 million. Spread equally across the 15 basketball players on the roster, that would come to $1.1 million per year. Alternatively, if that $16.9 million were allocated according to on-court productivity, Jahlil Okafor would have received $4.1 million for the one season he spent with the Blue Devils. The study also estimated that four other players were each worth more than $1 million.
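The productivity-based alternative allocates the same pool in proportion to each player’s contribution. The sketch below uses hypothetical productivity values for three players, since the study’s player-level numbers are not reproduced here; only the $33.7 million revenue figure comes from the text, and the function name is ours.

```python
def allocate_by_productivity(pool, productivity):
    """Split `pool` in proportion to each player's productivity.
    Negative productivity is floored at zero (an assumption)."""
    floored = {name: max(value, 0.0) for name, value in productivity.items()}
    total = sum(floored.values())
    if total == 0:
        raise ValueError("no player has positive productivity")
    return {name: pool * value / total for name, value in floored.items()}

pool = 33_700_000 * 0.5  # half of the reported men's basketball revenue
productivity = {"Player A": 6.0, "Player B": 3.0, "Player C": 1.0}  # hypothetical
shares = allocate_by_productivity(pool, productivity)
for name, amount in shares.items():
    print(f"{name}: ${amount:,.0f}")
```

Under an equal split every player gets the same amount, whereas here a player responsible for 60% of the team’s productivity receives 60% of the pool, which is how a single star can be worth several million dollars.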

To put it simply, if the men’s basketball team at Duke University operated in the same market we see in the NBA, many of its players would be paid millions of dollars. That they were not means they were very much exploited.

Exploitation Is Not Just a Man’s Game

One might think that we would only find such exploitation with respect to college football and men’s basketball. A similar study found, however, that women in college basketball also could generate more revenue than what they were paid by their school. The same was found with respect to some athletes in college softball (study forthcoming at the Journal of Sports Economics) and women’s gymnastics (study presented at the Western Economic Association). In sum, exploitation is not simply a man’s game in college sports.

Of course, because revenues are currently much higher in college football and men’s college basketball, the wages we would see in a competitive labor market would be predicted to be much higher in these two men’s sports than what we might see in women’s college sports. It is important to understand why those differences exist. Title IX became law in 1972. Prior to this law, colleges and universities were under no obligation to offer and invest in women’s sports. Due to the prevailing discrimination, women’s college sports very much lagged behind men’s college sports.

After Title IX became law, the investment gap between men’s and women’s college sports narrowed. But as USA Today’s investigation into Title IX revealed, the gap most certainly didn’t vanish. Consequently, it is reasonable to infer that the revenue gap we see in college sports is really about discrimination. That means there is a good argument to be made that athletic revenues should be evenly distributed across men’s and women’s sports. Or as the economist Stefan Szymanski put it in a discussion of U.S. Soccer, there is a good case to be made that men’s sports should pay reparations to women’s sports to overcome decades of discrimination.

However you think the revenue should be distributed among the athletes in college sports, one issue should be clear: The current system, which dramatically limits the compensation athletes receive for the revenue they directly generate for their schools, is wrong. The revenues earned by colleges and universities come from the efforts of the employees at these institutions. Currently these institutions pay the university administrators, faculty, staff and coaches wages that are negotiated in a labor market. Like these people, college athletes are employees and they should also be paid for their efforts in a labor market that isn’t controlled by the monopsony power of the NCAA.

Countering the Arguments Against Payments

Not surprisingly, not everyone likes this idea. More specifically, those who benefit from the NCAA’s monopsony power and others who simply don’t understand the economics of college sports often raise the following objections to this plan.

- Football and men’s basketball pay for all the other sports. If millions must be paid to athletes in these sports, that money won’t be available for every other sport. Rod Fort and Jason Winfree demonstrated over a decade ago that, per the numbers released by football and men’s basketball teams, this is simply not true. In the book “15 Sports Myths and Why They’re Wrong,” these two economists noted that the gap between reported revenue and expenses for these men’s teams generally wasn’t large enough to support the remaining sports. In other words, most of the revenue these teams generated was simply spent by the teams themselves.

- Most college sports teams don't make a profit. So, there is no money to pay the players. When we look at the revenue and expense data for college sports teams, most don't report a "profit." Of course, colleges and universities are generally "non-profit" institutions. That means there is no "owner" to claim any excess revenue. Or, to put it differently, non-profits tend to put all excess revenue back into the program. Consequently, we should not expect to see a "profit." They are called "non-profits" for a reason!

- If college players have to be paid, then only a few college sports teams will exist. Schools with less revenue will simply shut down their athletic programs. It is not hard to see that this story can't be true. For most people, college sports are what we see at programs like the University of Nebraska and the University of Oklahoma. But most college sports teams exist at much smaller institutions, where revenues are clearly much smaller. Despite a lack of direct revenue, my school, Southern Utah University, still offers a collection of college sports teams. Again, sports have been found to be a good way to advertise an institution. The Salt Lake Tribune is far more likely to report on the exploits of the Southern Utah University football or gymnastics team than it is to report on my latest book or article. I know that sounds crazy. But it's true!

- If schools start paying college athletes, then college sports will become a minor league sports business. No one wants to see that happen. There is a sense that if players are paid a wage and also given the right to choose their employer (via the frequent use of the transfer portal), then college sports will lose their appeal. That seems unlikely. We saw this story play out in Major League Baseball in the 1970s, when players gained the right to free agency and salaries skyrocketed. Fans didn't lose interest, because ultimately fans want to see their favorite team succeed. If a fan's college football team wins with a collection of players the school just hired, that fan will be thrilled. The University of Colorado this year clearly illustrates that point!

Unfortunately, this is likely just a sample of the many objections people have to treating college athletes like employees. Those who object to this idea seem quite adept at predicting that paying college athletes would have catastrophic consequences!

But the current system has already produced catastrophic consequences for more than a century. The monopsony power of colleges and universities has resulted in millions of dollars being transferred from the workers who generate much of this revenue (i.e., athletes) to other people employed by these institutions (i.e., coaches and administrators).

We are now moving to a system where athletes can get paid by boosters. Of course, that can hardly be considered a good system either. After all, why would any institution think it is a good idea to have the pay of its workers controlled by individuals and groups not associated with the institution?

At this point, it should be obvious there is a better way. College sports has done quite well for over a century, and the coaches and administrators associated with these programs have enjoyed most of the benefits. It is time to end the monopsony power of the NCAA and start treating college athletes like any other college employee.

That doesn't mean replacing the NCAA's current system of restricting compensation with another system that controls athlete compensation. What I am advocating is replacing the current system of monopsonistic control with a system where college athletes are treated like any other employee. In other words, we should bring a free labor market to college sports. Yes, that means coaches and administrators will likely end up with less. But the people we are watching play the games should be fully compensated for their efforts promoting the institutions that hired them.

David Berri is a professor of economics at Southern Utah University, lead author of the books The Wages of Wins and Stumbling on Wins, and author of the textbook Sports Economics.